Adaptive Sync Technologies Explained

The 101 on Adaptive Sync / G-Sync / FreeSync

I will repeat and explain this at least a couple of times, because it is the key to understanding technologies like FreeSync and G-Sync. Here's the thing: your games are not rendered at a fixed, consistent frame rate. The graphics card fires off frames towards your monitor as fast as it possibly can, frame by frame. As such, the frame rate is dynamic and can easily bounce from, say, 30 to 80 FPS in a split second depending on variables like the 3D scene, polygon count, texture quality, user interaction, effects shaders kicking in, etc. On the display side of things, you have your monitor, and it is a fixed refresh rate device: it refreshes at, let's say, 60 Hz (the monitor refreshes and fires off 60 images at you with each second that passes).
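To put numbers on that mismatch, here is a minimal sketch that converts the rates mentioned above into time per frame. The helper name `frame_time_ms` is made up for this illustration; the 30 and 80 FPS figures and the 60 Hz panel come from the text.

```python
def frame_time_ms(rate_per_second):
    """Time budget of one frame (or one refresh) in milliseconds."""
    return 1000.0 / rate_per_second


# The monitor's fixed budget versus the GPU's moving target.
print(f"60 Hz refresh : {frame_time_ms(60):.2f} ms per refresh")
for fps in (30, 80):
    print(f"{fps} FPS render : {frame_time_ms(fps):.2f} ms per frame")
```

At 30 FPS a frame takes about two refresh periods to arrive, while at 80 FPS a new frame is ready before the current refresh has finished. Neither lines up with the monitor's fixed 16.67 ms cadence.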

Fixed & Dynamic Collide

Fixed and dynamic are two different things, and they collide with each other. On one end we have the graphics card rendering at a varying frame rate, while the monitor wants to show 60 images per second at a timed and sequential interval (I'll use 60 Hz screens as an example). That causes a problem: whenever the FPS is slower (or faster) than 60, a single refresh of the monitor can, simply put, end up showing parts of multiple rendered frames. Graphics cards aren't that good at rendering at fixed speeds, so the industry tried to solve that with VSYNC on or, alternatively, off. Up to now we have dealt with problems like vsync stutter or tearing in basically two ways.

The first way is to simply ignore the refresh rate of the monitor altogether, and update the image being scanned to the display mid-cycle. This you guys all know as "VSync Off Mode", and it is the default way most FPS gamers play. If you were to freeze the display on a single refresh cycle, however, this is what you would see: the epitome of graphics rendering evil, screen-tearing. When a single refresh cycle (1/60th of a second on your monitor) shows two or more images, a very obvious tear line is visible at the break. Yup, that is what we all refer to as screen-tearing, and each and every one of you has seen it many times.

The second way is to turn VSYNC ON. That solves tearing, but it does create a new problem. See, VSYNC makes the graphics card wait for the monitor, and that delay causes stutter whenever the GPU frame rate is below the display refresh rate. There's another nasty side effect: mouse LAG. How many of you guys have been irritated by incorrect and laggy mouse input during gaming? Yes, VSYNC delays the rendered GPU frames, and the gap between when a frame is rendered and when it is displayed is responsible for the lag that you are experiencing. So it increases frame latency, and the direct result is what you guys know and hate: input lag, the visible delay between a button being pressed and the result occurring on-screen.
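The two behaviours can be sketched with a toy timing model. This is a simplification, not how any driver actually works: it assumes a 60 Hz panel with the refresh interval rounded to whole microseconds, and it models VSYNC on as plain double buffering where the GPU stalls until its finished frame is shown. The function names are invented for this example.

```python
from collections import Counter

# Assumption: 60 Hz panel, interval rounded to whole microseconds so
# all arithmetic below stays in exact integers.
REFRESH_INTERVAL_US = 16_667


def vsync_off_swaps_per_refresh(render_times_us):
    """VSYNC off: count frames that replace the image mid-scan, per refresh.

    Every mid-scan swap produces one tear line in that refresh cycle.
    """
    swaps = Counter()
    now = 0
    for cost in render_times_us:
        now += cost  # the GPU never waits for the monitor
        if now % REFRESH_INTERVAL_US != 0:  # a swap exactly on a boundary does not tear
            swaps[now // REFRESH_INTERVAL_US] += 1
    return dict(swaps)


def vsync_on_present_times(render_times_us):
    """VSYNC on (double-buffer assumption): time each frame reaches the screen."""
    presents = []
    now = 0
    for cost in render_times_us:
        done = now + cost
        # Ceiling division: hold the frame until the next refresh boundary.
        present = -(-done // REFRESH_INTERVAL_US) * REFRESH_INTERVAL_US
        presents.append(present)
        now = present  # GPU stalls until the swap, then starts the next frame
    return presents


# A GPU needing 20 ms per frame (50 FPS when left alone).
print(vsync_off_swaps_per_refresh([20_000] * 3))
print(vsync_on_present_times([20_000] * 3))
```

In this model the 20 ms frames tear in every refresh they land in with VSYNC off. With VSYNC on they only reach the screen on every second refresh, so a GPU capable of 50 FPS is effectively shown at 30 FPS, and each frame sits finished for roughly 13 ms before you see it.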

How Do We Fix This?

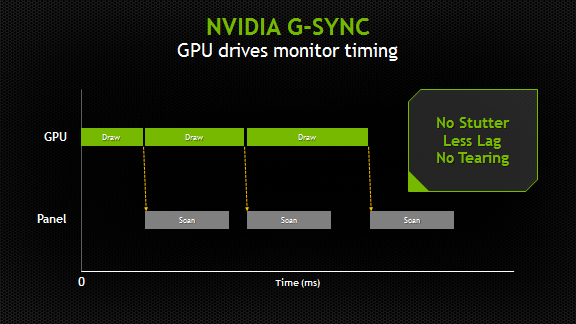

With FreeSync, Adaptive Sync or G-Sync, each time your graphics card has rendered one frame (one draw), the monitor refresh (scan) is aligned with that frame. The refresh rate of the monitor has become dynamic and is now synchronized with the GPU. In other words, the monitor becomes a slave to your graphics card, as its refresh rate in Hz follows the frame rate. Yes, it is no longer functioning at a static refresh rate of 60 Hz.

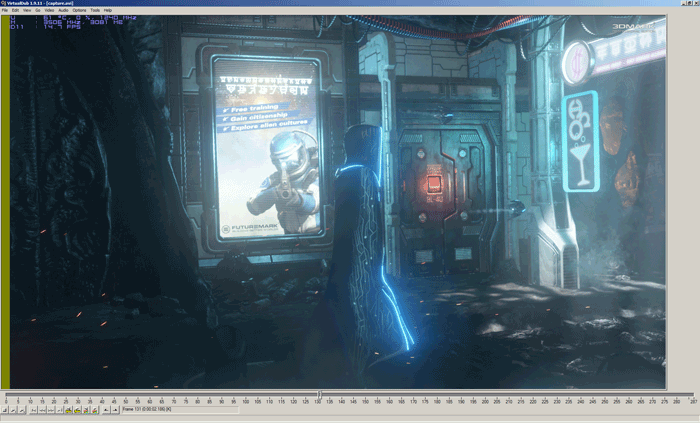

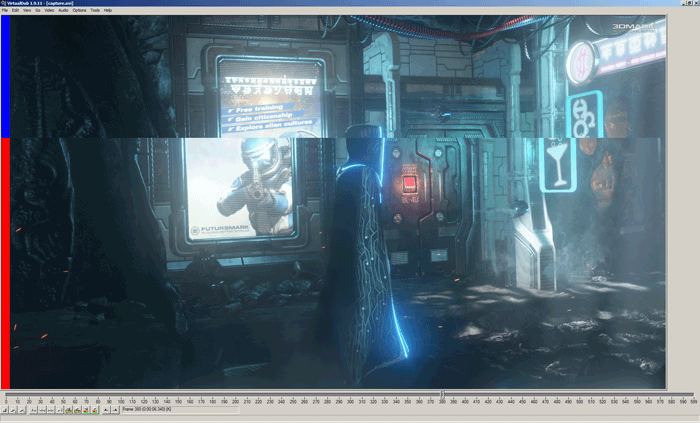

Look at the upper and lower parts: that is screen-tearing. This is FCAT, by the way, where we color each rendered frame with a color bar for analysis.

With the graphics card and monitor dynamically and totally synchronized, you have effectively eliminated three things: stutter, input lag and screen-tearing. It gets even better. Without stutter and screen-tearing on a nice IPS LCD panel, even at, say, 35 Hz, you'd be having a pretty good gaming experience (visually), as the perception of a smoothly rendered game is just so much higher.
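As a rough illustration of the monitor following the GPU, the sketch below starts a refresh the moment each frame is finished. It is a toy model with an invented function name, and it ignores the fact that real panels only support a limited refresh range.

```python
def adaptive_sync_schedule(render_times_us):
    """Return (present_time_us, effective_refresh_hz) for each frame.

    Simplified model: the panel refreshes as soon as a frame is done,
    so no frame waits for a boundary and none is replaced mid-scan.
    """
    schedule = []
    now = 0
    for cost in render_times_us:
        now += cost
        schedule.append((now, 1_000_000 / cost))
    return schedule


# Frame times of 25 ms, 20 ms and 12.5 ms, i.e. a frame rate on the move.
for present_us, hz in adaptive_sync_schedule([25_000, 20_000, 12_500]):
    print(f"shown at {present_us / 1000:.1f} ms, panel running at {hz:.0f} Hz")
```

Each frame is displayed at the instant it completes, and the effective refresh rate simply tracks the frame rate (40, 50 and 80 Hz in this run) instead of staying pinned at 60 Hz.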