Graphics card performance 1080p

DirectX 11 or 12?

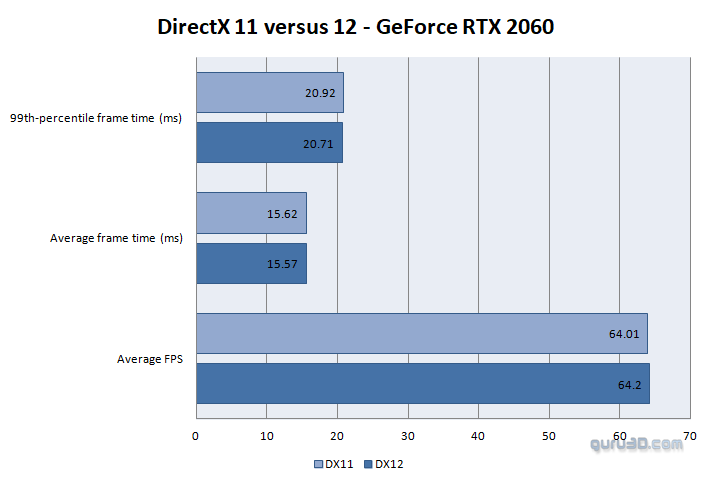

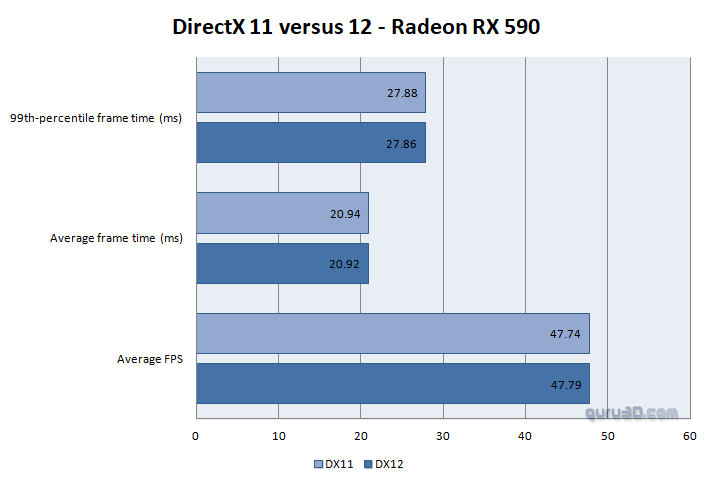

We already spilled the beans on the previous page: we started our testing in DirectX 12, as we assumed the game works best that way. We did verify that assumption. Below is a plot of a GeForce RTX 2060 and a Radeon RX 590 at Full HD on the older Core i7 5960X test platform, which should be a little more CPU bound, something that should show more easily in DX12.

With no performance difference between the two APIs, we'll use DX12 for all our regular testing, for the sole reason that DXR (DirectX Raytracing) requires DX12. As you can see below, the difference in a 30-second time demo recording is close to nil.

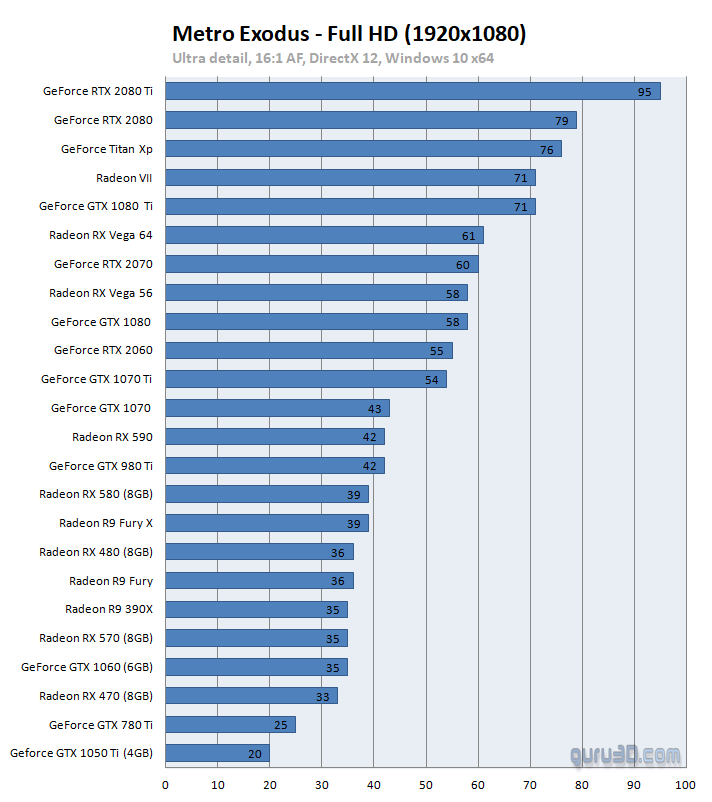
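For readers who want to repeat the DX11 versus DX12 comparison on their own system, the arithmetic behind such a time demo can be sketched as follows. This is a minimal sketch, not the tooling used for this review: it assumes you have logged per-frame render times in milliseconds with a capture tool of your choice, and the function names and sample numbers are made up for illustration.

```python
def average_fps(frame_times_ms):
    """Average FPS over a capture: frames rendered divided by total seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percent_difference(baseline_fps, candidate_fps):
    """Relative change of candidate versus baseline, in percent."""
    return (candidate_fps - baseline_fps) / baseline_fps * 100.0


# Hypothetical frame times (ms); NOT measurements from this review.
dx11_frame_times = [16.0, 17.0, 16.5, 16.5]
dx12_frame_times = [16.0, 16.0, 17.0, 17.0]

dx11_fps = average_fps(dx11_frame_times)
dx12_fps = average_fps(dx12_frame_times)
delta = percent_difference(dx11_fps, dx12_fps)
print(f"DX11 {dx11_fps:.1f} fps, DX12 {dx12_fps:.1f} fps, delta {delta:+.1f}%")
```

Averaging over the total capture time, rather than averaging per-frame FPS values, keeps a handful of very fast frames from inflating the result.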

Graphics card performance

Full HD performance

Ultra Settings / RTX off / DLSS off / HairWorks off / Advanced Physics on

The type of game you play is always relevant, though. A first-person shooter is nice at 50 to 60 FPS, while an online shooter on a 144 Hz monitor feels better at 100+ FPS. On the opposite side of the spectrum, RPGs are different; there we are comfortable with framerates as low as 30 to 35 FPS. For racing games, I feel a minimum average of 40 FPS is a good starting point. If your framerate is too low, you can always lower the in-game image quality settings. Mind you, we test with reference cards or cards that have been clocked at reference frequencies. Factory-tweaked graphics cards can obviously run up to 20% faster, but for a generic overview we treat all cards the same.
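If you want to hold the chart results against the genre guidelines above, a small lookup does the job. The sketch below is an illustration only: the dictionary keys and function names are our own invention, the thresholds are the lower bounds quoted in the text, and the overclock helper assumes the full "up to 20%" uplift, which is a best case rather than a typical one.

```python
# Lower bounds for a comfortable average FPS, taken from the guidelines above.
# The genre labels are hypothetical keys chosen for this example.
MIN_COMFORTABLE_FPS = {
    "first-person shooter": 50,
    "online shooter (144 Hz)": 100,
    "rpg": 30,
    "racing": 40,
}


def is_comfortable(genre, average_fps):
    """True if the average FPS meets the guideline for the given genre."""
    return average_fps >= MIN_COMFORTABLE_FPS[genre]


def factory_oc_ceiling(reference_fps, uplift=0.20):
    """Best-case FPS for a factory-tweaked card, assuming the full uplift."""
    return reference_fps * (1.0 + uplift)


print(is_comfortable("rpg", 32))        # meets the 30 FPS guideline
print(is_comfortable("racing", 35))     # below the 40 FPS guideline
print(round(factory_oc_ceiling(50.0)))  # best case for a 50 FPS reference result
```

Treat the ceiling as an upper bound: a factory-tweaked card may land anywhere between the reference result and that figure.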

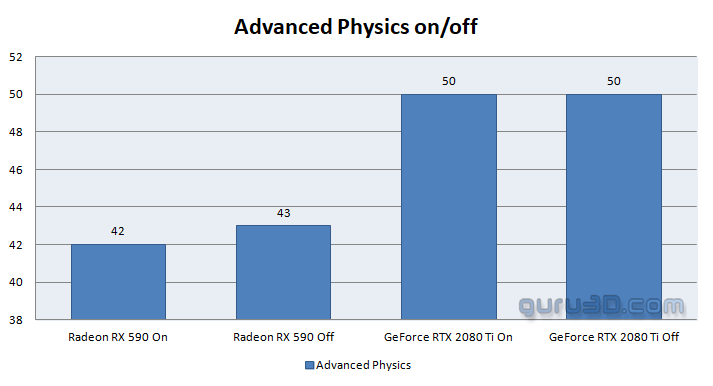

By the way, for those who wonder whether NVIDIA is performing some weird trickery with Advanced Physics: enabling it makes close to no difference in performance. Ergo, we opted to leave that feature enabled.