DX11 - Frametime performance

Frame Based Game Experience (stutter) Analysis

Lately some new measurements have been introduced: latency measurements, or what I like to call frame experience measurements. When you record a number of seconds of gameplay while tracking every frame rendered within that time, plot the output in a graph and then zoom in, you can see the turnaround time for the GPU. Basically, the time it takes to render one frame can be monitored and tagged with a number; this is latency. One frame can take, say, 17 ms. A colleague website discovered a while ago that there were some latency discrepancies between NVIDIA and AMD graphics cards, with results being worse for AMD, especially in multi-GPU solutions. Now FRAPS might not be the best tool to measure this with, but it's indicative enough to use. There is another problem with latency measurements: the vast majority of people don't like them, or simply do not understand them. Our forum reader base seems to really love this measurement; however, when I asked some generic end users, they simply did not have a clue how to interpret charts based on latency measurements.
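To make the relation between frame time and framerate a bit more tangible, here is a minimal Python sketch. It assumes the recording tool logs one cumulative timestamp (in milliseconds) per frame; the function names and the sample timestamps are made up for illustration and do not come from an actual FRAPS log.

```python
def frame_times_from_timestamps(timestamps_ms):
    """Turn cumulative per-frame timestamps (ms) into per-frame render times (ms).

    Assumption: each entry is the moment a frame was recorded, counted
    from the start of the capture.
    """
    return [later - earlier
            for earlier, later in zip(timestamps_ms, timestamps_ms[1:])]


def frame_time_to_fps(frame_time_ms):
    """Framerate you would get if every frame took frame_time_ms to render."""
    return 1000.0 / frame_time_ms


# Hypothetical capture: three gaps of 17 ms, 17 ms and 50 ms.
timestamps = [0.0, 17.0, 34.0, 84.0]
frame_times = frame_times_from_timestamps(timestamps)

for t in frame_times:
    print(f"{t:.1f} ms per frame is about {frame_time_to_fps(t):.1f} FPS")
```

So a frame that takes 17 ms corresponds to a little under 60 FPS, while a single 50 ms frame is equivalent to 20 FPS for that one moment.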

What do these measurements show?

Basically, what these measurements show are anomalies like small glitches and stutters that you can sometimes (and please do read that well: sometimes) see on screen. Below I'd like to run through a couple of titles with you. Mind you that average FPS matters more than frametime measurements. This is just an additional page or two of information that we'll be serving you from now on.

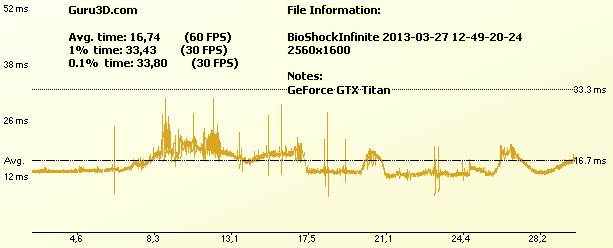

Above GeForce GTX Titan

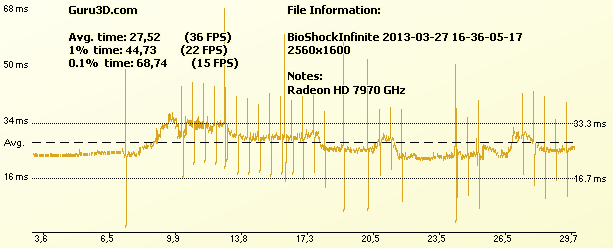

Above Radeon HD 7970 GHz (Hyper-threading off)

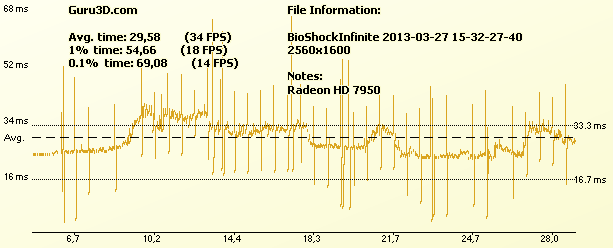

Above Radeon HD 7950 (Hyper-threading on)

So what are we looking at? Above we recorded 30 seconds of the Bioshock Infinite benchmark. The upper chart shows the GeForce GTX Titan, the lower ones the Radeon HD 7970 GHz (with Hyper-threading disabled) and then an R7950 (with HT enabled). There was some discussion that running this measurement with Hyper-threading enabled would result in more stutters, hence we included a test with HT disabled. Apparently that really doesn't make much of a difference. Each frame is recorded and FRAPS outputs it as latency, i.e. the time it takes to render one frame. Now if the GPU has an issue, or if there is something going on in the PC, what you see on screen could show a stutter or glitch. In these charts a spike means that the latency to draw a frame, or multiple frames, went up dramatically. Also, if a card is rendering slower, latency obviously goes up across the board, as it takes more time to render each frame. The reality is that you probably will not even notice spikes from, say, 30 ms up to 40 ms; 50 ms and above starts becoming troublesome and can be considered a low framerate, glitch or stutter.

That effect translates to what you see on your monitor. A spike could mean a glitch or stutter that you see on your monitor for a fraction of a second (or repeatedly). For NVIDIA we see an absolutely flawless and smooth result; AMD has a little bit more going on. Still, it's hard to detect on the monitor.
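As a rough illustration of how you could pick such spikes out of a recording yourself, here is a small Python sketch. It takes the 50 ms mark mentioned above as the point where frames start becoming troublesome; the function name, the constant and the sample frame times are assumptions made for this example, not part of FRAPS or of our test setup.

```python
# Assumed cut-off, taken from the rule of thumb in the text:
# frames of 50 ms and above start becoming troublesome.
TROUBLESOME_MS = 50.0


def find_spikes(frame_times_ms, threshold_ms=TROUBLESOME_MS):
    """Return (frame index, frame time) pairs at or above the threshold."""
    return [(index, frame_time)
            for index, frame_time in enumerate(frame_times_ms)
            if frame_time >= threshold_ms]


# Hypothetical frame times in ms: mostly smooth, two mild bumps, one real spike.
sample = [16.7, 17.1, 33.0, 38.5, 16.9, 52.4, 17.0]
spikes = find_spikes(sample)

print(f"{len(spikes)} of {len(sample)} frames took {TROUBLESOME_MS:.0f} ms or longer")
for index, frame_time in spikes:
    print(f"frame {index}: {frame_time:.1f} ms")
```

The bumps to 33 ms and 38.5 ms stay below the cut-off, which matches the idea that you would most likely not notice them; only the 52.4 ms frame gets flagged.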

Multi-GPU

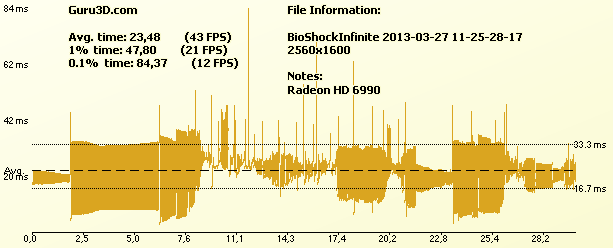

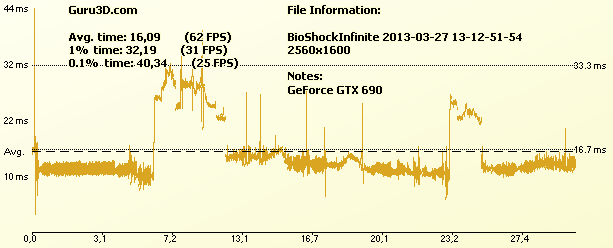

Above GeForce GTX 690 (2 GPU)

I will probably stop using FRAPS with multi-GPU cards completely; there's a lot going on at AMD's side that is really too hard to explain. I included the results because you guys want to see them. But for multi-GPU, my advice really is to ignore this. In the future we'll move towards FCAT benchmarking, which will show a lot more in-depth and more reliable results.