RT cores and HW accelerated raytracing

RT cores and hardware-assisted raytracing

NVIDIA is adding 72 RT cores to its full Turing GPU. With these, developers can apply what I just referred to as hybrid raytracing. You are still performing your shaded (rasterization) rendering, but developers can add real-time ray traced environmental effects such as reflections and refractions of light onto objects. Think of the sea, waves, and water accurately reflecting the world and its lights. You can also think of a fire or explosion bouncing light off walls and reflecting in the water. It's not just about reflections bouncing the right light rays, but also the other way around: shadows. Where there is light there should be shadow, and physically accurate soft shadows can now be computed. It has been really hard to create accurate and proper shadows in a traditional shading engine; this is now possible with raytracing. Raytraced ambient occlusion and global illumination are also going to be a big thing. None of this has been practical in real time before, and raytracing is all about achieving realism in your game.

Please do watch the above video

Now, I could write up three thousand words and you'd still be confused as to what you can achieve with raytracing in a game scene. Ergo, I'd like to invite you to look at the video above, which I recorded at a recent NVIDIA event. It's recorded by hand with a smartphone, but even at this quality you can easily see how impressive the technology is by looking at several use-case examples that can be applied to games. Allow me to rephrase that: try to pick out the different RT technologies used and imagine them in a game, as that is the ultimate goal we're trying to achieve here.

So how does DXR work?

Normally light rays, whether direct, bounced, reflected or refracted, hit an object, right? Take the position where you are sitting and look around you. Anything you can see is based on light rays. That's colors and lights bouncing off each other and off all objects. Thinking about what, and more importantly how, you are seeing things inside your room is already complicated. But basically, if you take a light source, that source will emit light rays that bounce off the object you are looking at.

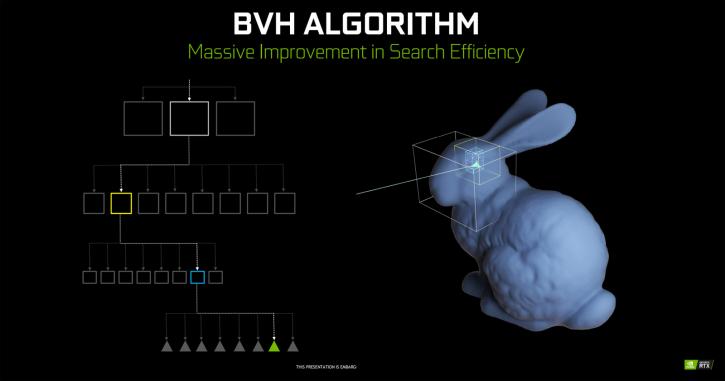

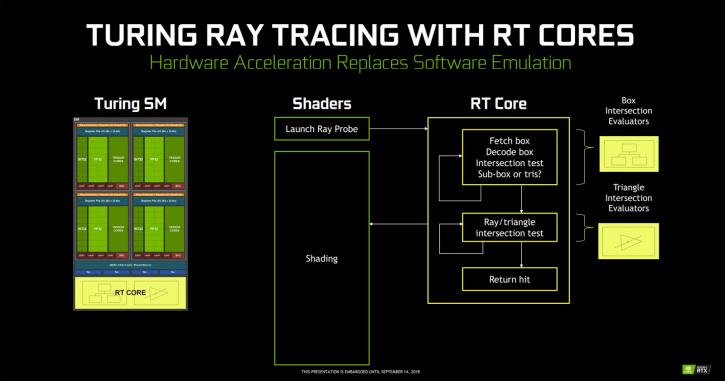

Well, DXR is reversing that process, and it makes that affordable by applying a technique called BVH = “Bounding Volume Hierarchy”. DirectX Raytracing uses an algorithm where an object is divided into boxes (the resolution/number of boxes, for more precision, can be programmed by the developer). So boxes and ever smaller boxes, until you reach triangles. The fundamental idea is that not every triangle needs to be tested against a ray, only the triangles inside the boxes that the ray actually passes through. The architecture in the RT core basically has two fundamental functions: one checks boxes, the other triangles. It works a bit like a shader for rasterization code, but specifically for rays. Once the developer has decided what level of boxes is applied to a certain object, rays are fired into the scene and tested against those boxes. A ray will bounce and hit other objects, lights and so on. Once that data is processed, you have shading, deep learning and ray tracing all feeding into the final image. NVIDIA is doing roughly 50-50 shading and raytracing on an RT enhanced scene.
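To make the "one function checks boxes, the other checks triangles" idea concrete, here is a minimal Python sketch of a BVH traversal. It is only an illustration under assumptions: the names `Node`, `hits_box`, `hit_triangle` and `trace` are made up for this example and are neither the DXR API nor NVIDIA's hardware implementation. The box check uses the common slab test and the triangle check uses the Möller-Trumbore algorithm, both swapped in here as well-known stand-ins.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Vec = Tuple[float, float, float]
Tri = Tuple[Vec, Vec, Vec]

def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

@dataclass
class Node:  # hypothetical BVH node: an axis-aligned box holding boxes or triangles
    lo: Vec
    hi: Vec
    children: List["Node"] = field(default_factory=list)
    triangles: List[Tri] = field(default_factory=list)

def hits_box(origin: Vec, direction: Vec, lo: Vec, hi: Vec) -> bool:
    """Box check (slab test): does the ray pass through the box?"""
    t_near, t_far = 0.0, float("inf")
    for axis in range(3):
        if abs(direction[axis]) < 1e-12:  # ray is parallel to this pair of faces
            if origin[axis] < lo[axis] or origin[axis] > hi[axis]:
                return False
            continue
        t0 = (lo[axis] - origin[axis]) / direction[axis]
        t1 = (hi[axis] - origin[axis]) / direction[axis]
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return False
    return True

def hit_triangle(origin: Vec, direction: Vec, tri: Tri) -> Optional[float]:
    """Triangle check (Moller-Trumbore): distance along the ray, or None."""
    v0, v1, v2 = tri
    e1, e2 = sub(v1, v0), sub(v2, v0)
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < 1e-12:  # ray is parallel to the triangle plane
        return None
    inv = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv
    return t if t > 1e-9 else None

def trace(node: Node, origin: Vec, direction: Vec, stats: dict) -> Optional[float]:
    """Walk the hierarchy; triangles are only tested inside boxes the ray enters."""
    stats["boxes"] += 1
    if not hits_box(origin, direction, node.lo, node.hi):
        return None  # everything inside this box is skipped
    best = None
    for tri in node.triangles:
        stats["triangles"] += 1
        t = hit_triangle(origin, direction, tri)
        if t is not None and (best is None or t < best):
            best = t
    for child in node.children:
        t = trace(child, origin, direction, stats)
        if t is not None and (best is None or t < best):
            best = t
    return best

# Tiny made-up scene: two leaf boxes with one triangle each, under one root box.
left = Node((-3, -1, 4), (-1, 1, 6), triangles=[((-3, -1, 5), (-1, -1, 5), (-2, 1, 5))])
right = Node((1, -1, 4), (3, 1, 6), triangles=[((1, -1, 5), (3, -1, 5), (2, 1, 5))])
root = Node((-3, -1, 4), (3, 1, 6), children=[left, right])

stats = {"boxes": 0, "triangles": 0}
print(trace(root, (-2.0, 0.0, 0.0), (0.0, 0.0, 1.0), stats), stats)
```

In this tiny scene the ray aimed at the left triangle is checked against three boxes but only one triangle; the triangle in the right-hand box is never touched. With millions of triangles, that skipping is where the savings come from.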

So how does that translate into games? The best example is to show you my (again recorded with a smartphone) Battlefield 5 recording. While the game is in early alpha stages, you will likely be impressed by what is happening here, as explained really nicely by fellow guru pSXAuthor in the forums. What is basically shown here is the difference between screen space reflections (SSR) and raytraced reflections. SSR works by approximating raytracing in screen space. For each and every pixel on a reflective surface a ray is fired out, and the camera's depth and colour buffers are used to perform an approximation to raytracing (you can imagine drawing a line in 3D in the direction of the reflected light, reading the depth of each pixel until the line disappears behind another surface - nominally this will be the reflected point if the intersection point is close to the line - otherwise it is probably an occlusion - doing it efficiently and robustly is a little more involved than this, but this explanation is very close to the reality). This approximation can be quite accurate in simple cases. However, because the camera's image is being used, no back-facing surfaces can ever be reflected (you will never see your face in a puddle!), nothing off screen can be reflected, and geometry behind other geometry from the point of view of the camera can never be reflected. This last point explains why the gun in an FPS causes areas in the reflections to be hidden - the reason is very simple: those pixels should be reflecting something which is underneath the gun in the camera's 2D image. This also explains why the explosion is not visible in the car door: it is out of shot. Actually, it also appears that the BF engine does not reflect the particle effects anyway (probably reflection is done earlier in the pipeline than the particles are drawn).
Using real raytracing for reflections avoids all of these issues (at the cost of doing full raytracing, obviously). That process is handled by the RT cores running Microsoft's DXR.
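The screen-space march described above can be sketched in a few lines of Python. This is a deliberately simplified illustration, not engine code: it works on a single made-up scanline instead of a full 2D image, and the function name `ssr_march`, its parameters and the `thickness` tolerance are all assumptions chosen for the example.

```python
from typing import List, Optional

def ssr_march(depth: List[float], colour: List[str],
              start_x: int, start_depth: float,
              step_x: int, step_depth: float,
              max_steps: int = 64, thickness: float = 0.5) -> Optional[str]:
    """March a reflected ray across one scanline of the camera's buffers.

    Returns the colour the ray appears to hit, or None when screen space
    has no answer (the ray left the screen or went behind a nearer object).
    """
    x, z = start_x, start_depth
    for _ in range(max_steps):
        x += step_x
        z += step_depth
        if x < 0 or x >= len(depth):
            return None  # off screen: the camera never saw what is there
        behind = z - depth[x]
        if behind >= 0.0:
            # The ray has gone behind the visible surface at this pixel.
            # Close to the surface: treat it as the reflected point.
            # Far behind it: probably an occluder, so nothing can be trusted.
            return colour[x] if behind <= thickness else None
    return None

# Hypothetical scanline: a floor pixel at x=1, a distant wall on the right.
depth = [9.0, 2.0, 9.0, 9.0, 9.0, 6.0, 6.0, 6.0]
colour = ["sky", "floor", "sky", "sky", "sky", "wall", "wall", "wall"]

# Reflection heading right and away from the camera finds the wall.
print(ssr_march(depth, colour, start_x=1, start_depth=2.0, step_x=1, step_depth=1.0))

# Reflection heading left runs off the edge of the screen.
print(ssr_march(depth, colour, start_x=1, start_depth=2.0, step_x=-1, step_depth=1.0))

# Put a near object (think of the gun) at x=3: the ray disappears behind it.
depth_gun = list(depth)
depth_gun[3] = 1.0
colour_gun = list(colour)
colour_gun[3] = "gun"
print(ssr_march(depth_gun, colour_gun, start_x=1, start_depth=2.0, step_x=1, step_depth=1.0))
```

The first call finds the wall; the other two return `None`, which are exactly the off-screen and behind-the-gun cases from the explanation. A raytraced reflection does not have those blind spots because it queries the scene geometry itself rather than the camera's image.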

Microsoft can count on cooperation from the software houses. The two largest publicly available game engines, Unreal Engine 4 and Unity, will, for example, support it. EA is also using its Frostbite and Seed engines to implement DXR.

The vast majority of the market is therefore already covered. So the functionality of RT cores / RTX will find its way into game applications and engines like Unreal Engine, Unity and Frostbite. NVIDIA has also teamed up with game developers like EA, Remedy, and 4A Games. The biggest titles this year, of course, will be the new Tomb Raider and Battlefield 5. Summing things up, DXR, or ray tracing in games as a technology, is a game changer. How Turing's actual processing power holds up remains to be seen until it has been thoroughly tested.