Graphics card performance 1080p

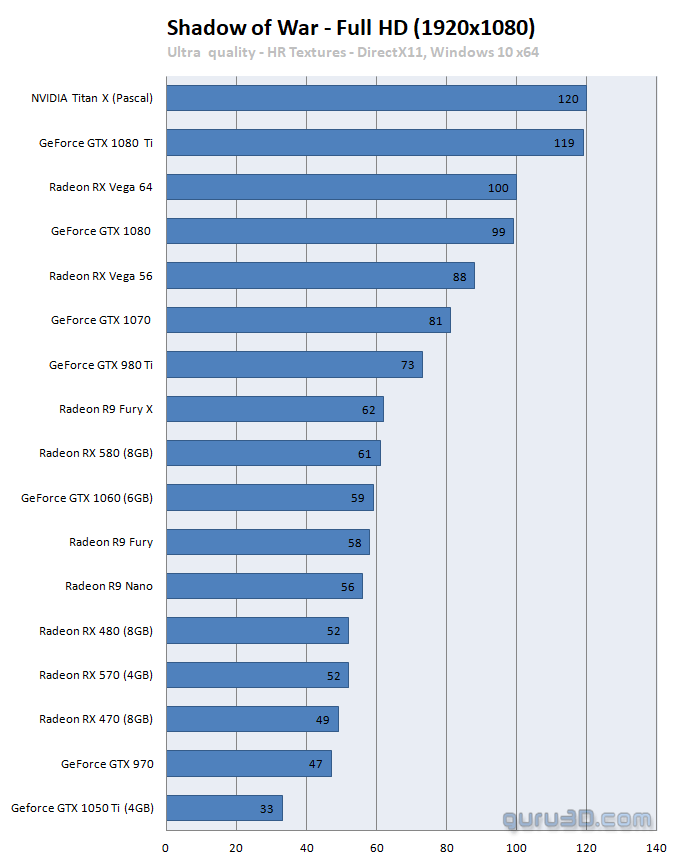

The game offers several quality modes, up to Ultra. Since even entry-level to mainstream graphics cards achieve good frame rates at Ultra settings at 1080p, that is the quality mode we use. There is little to no difference between the Very High and Ultra quality modes other than the Ultra Textures (DLC). We use this specific mode because, hey, you are playing games on a PC, and that is all about the PC experience: proper image quality.

One more note on this: you can argue whether or not the UHD textures have an effect at 1080p. More pixels are better, however, if you have the horsepower for it. Heck, if you play games at 1080p with DSR/VSR downsampling from UHD, you also see a difference, right? So considering the UHD texture pack has little effect on overall performance, I'd say install it.

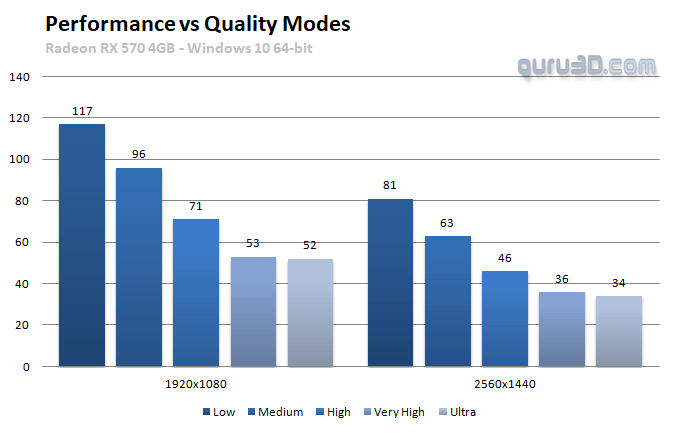

In the above chart you can see the differences in performance between the five primary quality modes at Full HD (1920x1080) and WQHD (2560x1440). Should you need extra performance, dropping to the High quality mode makes an effective difference. The Low and Medium quality modes are just not worth the loss in image quality for the performance gained, imho (unless you use an IGP or something).
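To put the resolutions in perspective, here is a minimal sketch that compares their raw pixel counts. Pixel count is only a rough first-order proxy for GPU load, not a performance prediction, and the names `RESOLUTIONS`, `pixel_count` and `pixel_ratio` are illustrative, not taken from any tool used in this article.

```python
# Resolutions mentioned in this article, as (width, height) in pixels.
RESOLUTIONS = {
    "Full HD": (1920, 1080),
    "WQHD": (2560, 1440),
    "UHD": (3840, 2160),
}


def pixel_count(name):
    """Return the total number of pixels rendered per frame."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(name, baseline="Full HD"):
    """Return how many times more pixels `name` has than `baseline`."""
    return pixel_count(name) / pixel_count(baseline)


if __name__ == "__main__":
    for name in RESOLUTIONS:
        print(f"{name}: {pixel_count(name):,} pixels, "
              f"{pixel_ratio(name):.2f}x Full HD")
```

WQHD pushes roughly 1.8 times the pixels of Full HD, and UHD exactly four times, which is why a card that is comfortable at 1080p can need a lower quality mode at higher resolutions.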

The type of game you play is always relevant, though: a first-person shooter is nice at 50 to 60 FPS, while an online shooter on a 144 Hz monitor feels better at 100+ FPS. On the opposite side, things are different for RPGs, where we are comfortable with frame rates as low as 30 to 35 FPS. For racing games, I feel an average of at least 40 FPS is a good starting point. If your frame rate is too low, you can always lower the in-game image quality settings. Mind you, we test with reference cards or cards that have been clocked at reference frequencies. Factory-tweaked graphics cards can obviously run up to 20% faster, but for the generic overview we treat all cards equally.
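The rule-of-thumb targets above can be sketched as a small lookup, which makes it easy to check a measured average against the genre you play. This is a rough illustration only: the thresholds are the lower bounds quoted in the paragraph, the 20% factory-overclock figure is treated as a best case, and the names `TARGET_FPS`, `frame_time_ms`, `meets_target` and `best_case_factory_fps` are hypothetical.

```python
# Lower-bound average FPS targets per genre, as quoted in the text above.
TARGET_FPS = {
    "first-person shooter": 50,
    "online shooter (144 Hz)": 100,
    "RPG": 30,
    "racing": 40,
}


def frame_time_ms(fps):
    """Convert an average frame rate into milliseconds per frame."""
    return 1000.0 / fps


def meets_target(genre, measured_fps):
    """Return True if the measured average reaches the genre's target."""
    return measured_fps >= TARGET_FPS[genre]


def best_case_factory_fps(reference_fps, uplift=0.20):
    """Best-case estimate for a factory-tweaked card.

    Assumes the full "up to 20%" uplift applies, which is an upper
    bound and will not hold for every card or game.
    """
    return reference_fps * (1.0 + uplift)


if __name__ == "__main__":
    measured = 45  # example average FPS on a reference-clocked card
    for genre, target in TARGET_FPS.items():
        verdict = "ok" if meets_target(genre, measured) else "lower settings"
        print(f"{genre}: target {target} FPS "
              f"({frame_time_ms(target):.1f} ms/frame) -> {verdict}")
```

If a reference card lands just under a target, a factory-tweaked version may or may not close the gap, so dropping a quality mode remains the more dependable fix.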