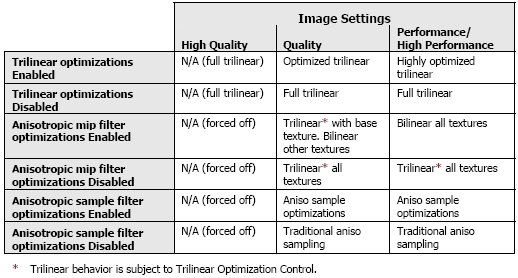

Legit Driver Optimizations

In recent builds of the NVIDIA ForceWare drivers, NVIDIA introduced selectable optimizations. The latest drivers, including the ones supplied with this 6600GT, show even more optimization options. Let's have a look at them:

These are generic, not application-specific, optimizations. The image quality loss is hardly noticeable to the naked eye, yet they offer you a slight performance increase. The optimizations are user-selectable from the graphics card's control panel, so you decide whether you want them enabled or not. Note that they are now enabled by default. We here at Guru3D.com currently run the default test with Trilinear optimizations enabled and the rest disabled. More on that in our benchmarks.

We are not going in depth on the optimizations, yet I do want to show you that there indeed are very tiny differences in image quality when you make use of generic optimizations. Let's have a look at the image below.

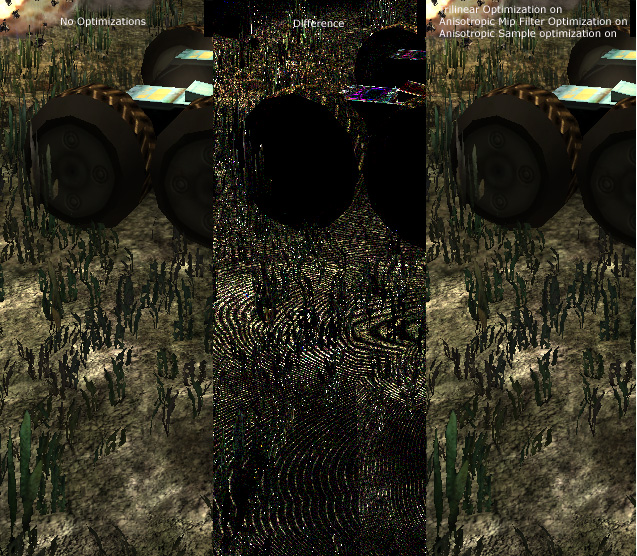

Difference (brightness on the middle image is 3 times enhanced)

Now then, the left image is a partial screenshot from Aquamark 3 at 1280x1024 with 16 levels of Anisotropic filtering and all optimizations disabled. To your right you'll see the same scene with all optimizations enabled. Ignore the middle picture for a moment and try to spot some differences. Also keep in mind that an actual game or benchmark renders this at high framerates, whereas you are now looking at a still image. Let's do that again, but with the camera placed for a better perspective and a deeper view:

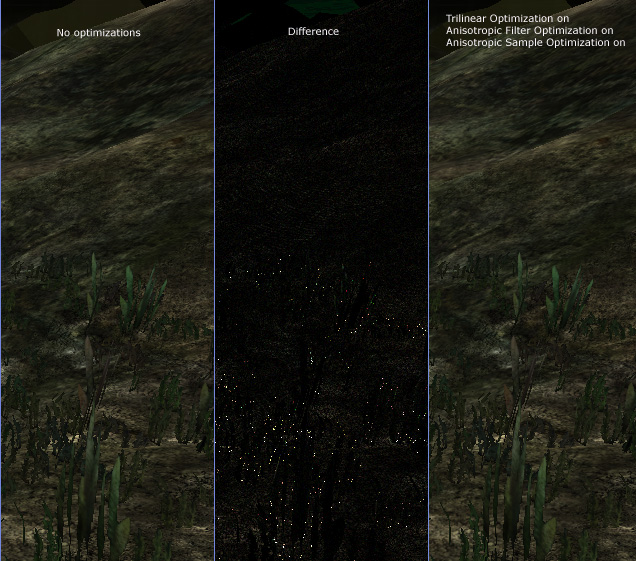

difference - brightness default

Difficult to detect the difference, huh? In the middle picture we see a program called The Compressonator (funnily enough an ATI application) at work. Basically it compares two images and spots the differences. For the top images I had to increase the difference brightness 3 times to show the difference a bit better, so you know exactly what to look for. The second one shows the difference in the Compressonator's default view. The highlighted dots show the areas with the most differences. I know this is just one application, and image comparisons can go on and on for pages; eventually you will find differences if you look hard enough.
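For those who want to try this kind of comparison themselves, here is a minimal sketch of the idea: take two screenshots, subtract them pixel by pixel and brighten the result. This is not how the Compressonator works internally, just an illustration of the principle. The function names, the `gain` parameter and the tiny 2x2 test images are all made up for the example.

```python
def difference_image(img_a, img_b, gain=1):
    """Per-pixel absolute difference of two equally sized RGB images.

    Images are lists of rows; each row is a list of (r, g, b) tuples with
    8-bit channel values. `gain` brightens the result, so gain=3 mimics
    the 3x enhancement used for the first comparison.
    """
    same_height = len(img_a) == len(img_b)
    same_widths = all(len(ra) == len(rb) for ra, rb in zip(img_a, img_b))
    if not (same_height and same_widths):
        raise ValueError("images must have the same dimensions")
    return [
        [
            # Clamp to 255 so the brightened value stays a valid 8-bit channel.
            tuple(min(255, abs(ca - cb) * gain) for ca, cb in zip(pa, pb))
            for pa, pb in zip(row_a, row_b)
        ]
        for row_a, row_b in zip(img_a, img_b)
    ]


def count_changed_pixels(diff):
    """Number of pixels where at least one channel differs."""
    return sum(1 for row in diff for px in row if any(px))


# Two hypothetical 2x2 "screenshots" that differ in a single pixel.
unoptimized = [[(10, 20, 30), (40, 50, 60)], [(70, 80, 90), (100, 110, 120)]]
optimized = [[(10, 20, 30), (40, 50, 60)], [(70, 80, 90), (104, 110, 118)]]

diff = difference_image(unoptimized, optimized, gain=3)
print(diff[1][1])                  # the one pixel that changed, brightened 3x
print(count_changed_pixels(diff))  # how many pixels differ at all
```

Identical pixels come out black, so anything that lights up in the result is a spot where the optimizations changed the output.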

The quantity of optimizations is starting to irritate me a little though. It's getting very confusing for the end-user and an extreme pain in the you know what for us reviewers. Don't get me wrong here, I praise NVIDIA for the option to make a selectable difference for full trilinear filtering and I truly wish that ATI would follow that trend, but this is a little over the top, isn't it? For the true performance tweakers among us, this is l33t stuff though.

Anyway, draw your own conclusions.

Power Supply demand

NVIDIA recommends a 300 Watt power supply for the 6600 series, so basically everyone has at least that in their system these days.

Architecture Characteristics of the GeForce 6 Series

- Pixel pipelines: 16 / 12 / 8

- Superscalar shader: Yes

- Pixel shader operations/pixel: 8

- Pixel shader operations/clock: 128

- Pixel shader precision: 32 bits

- Single texture pixels/clock: 16

- Dual texture pixels/clock: 8

- Adaptive Anisotropic Filtering: Yes

- Z-stencil pixels/clock: 32
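The per-clock figure in the list follows from the per-pixel one: 16 pipelines times 8 shader operations per pixel gives the 128 operations per clock quoted above. Here is a small sketch of that arithmetic. The values for the 12 and 8 pipeline configurations are my own assumption that the number scales linearly with pipeline count; the list only states the 128 figure.

```python
OPS_PER_PIXEL = 8  # "Pixel shader operations/pixel" from the list above


def shader_ops_per_clock(pipelines, ops_per_pixel=OPS_PER_PIXEL):
    """Peak pixel shader operations per clock for a given pipeline count."""
    return pipelines * ops_per_pixel


# 16 pipelines reproduces the listed 128 ops/clock. The 12 and 8 pipeline
# results are an extrapolation (assumed linear scaling), not a quoted spec.
for pipelines in (16, 12, 8):
    print(pipelines, "pipelines:", shader_ops_per_clock(pipelines), "ops/clock")
```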

Let's talk and compare a little. The GeForce 6600 series is manufactured on a 0.11µ process, where I expected it to be 0.13µ!

Get ready for the most vibrant, lifelike, and elegant graphics ever experienced on a PC. The groundbreaking NVIDIA® GeForce 6 Series of graphics processing units (GPUs) and their revolutionary technologies power worlds where reality and fantasy meet; worlds in which new standards are set for performance, visual quality, realism, and video functionality. The GeForce 6 Series GPUs deliver powerful, elegant graphics to drench your senses, immersing you in unparalleled worlds of visual effects for the ultimate PC experience.

| Specs | GeForce 6600 | GeForce 6600 GT | GeForce 6800 | GeForce 6800 GT | GeForce 6800 Ultra |
| --- | --- | --- | --- | --- | --- |
| Codename | NV43 | NV43 | NV40 | NV40GT | NV40U |
| Transistors | ? | ? | 222 million | 222 million | 222 million |
| Process, GPU maker | 110nm | 110nm | 130nm, IBM | 130nm, IBM | 130nm, IBM |
| Core clock | 300 MHz | 500 MHz | Up to 400 MHz | 350 MHz | 400-450 MHz |
| Memory | 128MB DDR1 | 128MB GDDR3 | 128MB DDR1 | 256MB GDDR3 | 256MB GDDR3 |
| Memory bus | 128-bit | 128-bit | 256-bit | 256-bit | 256-bit |
| Memory clock | Up to manufacturer | 2 x 500 MHz | 2 x 550 MHz | 2 x 500 MHz | 2 x 600 MHz |
| PCB | P212 | P212 | P2?? | P210 | P210 |
| Pipelines | 8 | 8 | 12 | 16 | 16 |
| FP operations | FP16, FP32 | FP16, FP32 | FP16, FP32 | FP16, FP32 | FP16, FP32 |
| DirectX | DirectX 9.0c | DirectX 9.0c | DirectX 9.0c | DirectX 9.0c | DirectX 9.0c |
| Pixel shaders | PS 3.0 | PS 3.0 | PS 3.0 | PS 3.0 | PS 3.0 |
| Vertex shaders | VS 3.0 | VS 3.0 | VS 3.0 | VS 3.0 | VS 3.0 |
| OpenGL | 1.5+ (2.0) | 1.5+ (2.0) | 1.5+ (2.0) | 1.5+ (2.0) | 1.5+ (2.0) |
| Price | $150 | $229 | $299 | $399 | $499 |
| Availability | Oct 2004 | Sep/Oct 2004 | May/June 2004 | May/June 2004 | May/June 2004 |
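To put the table into perspective, two theoretical numbers can be derived from it: pixel fill rate (pipelines times core clock) and memory bandwidth (bus width in bytes times the effective memory clock). The sketch below does that for three of the cards. These are peak paper figures, not measurements, and the names in the code are made up for the example. For the 6800 Ultra I assume the lower 400 MHz end of the listed 400-450 MHz core clock.

```python
# Figures copied from the spec table above. The 6800 Ultra core clock is
# listed as 400-450 MHz; the lower bound is used here as an assumption.
CARDS = {
    "GeForce 6600 GT": {"pipelines": 8, "core_mhz": 500, "bus_bits": 128, "mem_mhz": 500},
    "GeForce 6800 GT": {"pipelines": 16, "core_mhz": 350, "bus_bits": 256, "mem_mhz": 500},
    "GeForce 6800 Ultra": {"pipelines": 16, "core_mhz": 400, "bus_bits": 256, "mem_mhz": 600},
}


def pixel_fill_rate_mpixels(pipelines, core_mhz):
    """Theoretical peak fill rate in Mpixels/s: one pixel per pipeline per clock."""
    return pipelines * core_mhz


def memory_bandwidth_gb_s(bus_bits, mem_mhz, transfers_per_clock=2):
    """Theoretical peak bandwidth in GB/s (1 GB = 10^9 bytes).

    transfers_per_clock=2 reflects the "2 x" (double data rate) notation
    in the memory clock row.
    """
    bytes_per_transfer = bus_bits / 8
    return bytes_per_transfer * mem_mhz * transfers_per_clock / 1000


for name, spec in CARDS.items():
    fill = pixel_fill_rate_mpixels(spec["pipelines"], spec["core_mhz"])
    bandwidth = memory_bandwidth_gb_s(spec["bus_bits"], spec["mem_mhz"])
    print(f"{name}: {fill} Mpixels/s, {bandwidth:.1f} GB/s")
```

For the 6600 GT this works out to 4000 Mpixels/s and 16.0 GB/s, which shows where the 128-bit bus holds the card back compared to its 256-bit bigger brothers.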