FCAT Frametime analysis

Frametime and latency performance

With FCAT we will look into Frame Experience Analysis. With the charts shown we try to expose graphics anomalies like stutters and glitches in a plotted chart. A more recent addition is latency measurement, which is basically the inverse of FPS.

- FPS mostly measures performance, the number of frames rendered per passing second.

- Frametime, AKA Frame Experience, recordings mostly measure and expose anomalies: here we look at how long it takes to render one frame. Plot that chronologically and anomalies show up as peaks and dips in the chart, indicating something could be off.

| Frame time in milliseconds | FPS |
| --- | --- |
| 8.3 | 120 |
| 15 | 66 |
| 20 | 50 |
| 25 | 40 |
| 30 | 33 |
| 50 | 20 |
| 70 | 14 |
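The relation behind the table is simply FPS = 1000 / frame time in milliseconds. As a minimal sketch (the function names are ours, purely for illustration), the conversion in both directions could look like this:

```python
def frametime_to_fps(frametime_ms: float) -> float:
    """Convert the time needed to render one frame (in ms) to frames per second."""
    if frametime_ms <= 0:
        raise ValueError("frame time must be positive")
    return 1000.0 / frametime_ms


def fps_to_frametime(fps: float) -> float:
    """Convert frames per second to the time budget per frame (in ms)."""
    if fps <= 0:
        raise ValueError("FPS must be positive")
    return 1000.0 / fps


if __name__ == "__main__":
    for ms in (8.3, 15, 20, 25, 30, 50, 70):
        # int() drops the fraction, matching the whole-number FPS values in the table
        print(f"{ms:>5} ms -> {int(frametime_to_fps(ms))} FPS")
```

Note that the table lists whole FPS numbers with the fraction dropped, so 15 ms (66.7 FPS) appears as 66.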

We have a detailed article (read here) on the new FCAT methodology used, and it also explains why we do not use FRAPS anymore. Frametime: the time it takes to render one frame can be monitored and tagged with a number; this is latency. One frame can take, say, 17 ms. Higher latency can indicate a slow framerate, and weird latency spikes indicate stutter, jitter or twitches; basically anomalies that are visible on your monitor.
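To make the idea of a "latency spike" concrete, here is a rough sketch of how you could flag spikes in a list of recorded frame times yourself. This is not how FCAT does it; the rule used here (a frame counts as a spike when it takes more than twice the median frame time), the `factor` parameter and the sample numbers are all assumptions made for illustration.

```python
from statistics import median


def find_spikes(frametimes_ms, factor=2.0):
    """Return (frame index, frame time) pairs for frames slower than factor * median.

    The 'factor * median' rule is an illustrative assumption, not an FCAT definition.
    """
    if not frametimes_ms:
        return []
    baseline = median(frametimes_ms)
    return [
        (index, frametime)
        for index, frametime in enumerate(frametimes_ms)
        if frametime > factor * baseline
    ]


if __name__ == "__main__":
    # Made-up recording: a steady ~17 ms per frame with one 48 ms hiccup
    sample = [17.0, 16.5, 17.2, 16.8, 48.0, 17.1, 16.9, 17.0]
    print(find_spikes(sample))
```

Using the median as the baseline keeps a handful of slow frames from dragging the reference value up, which an average would do.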

What Do These Measurements Show?

What these measurements show are anomalies like small glitches and stutters that you can sometimes (and please do read that well, sometimes) see on screen. Below I'd like to run through a couple of titles with you. Bear in mind that Average FPS often matters more than frametime measurements.

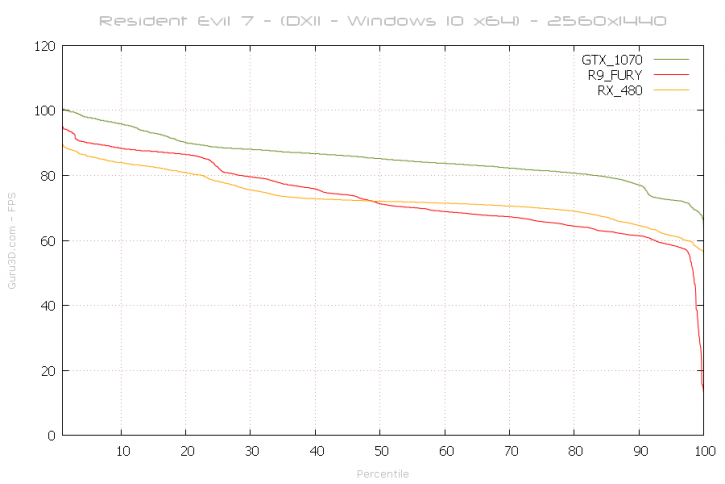

Above is an FCAT plot of latency relative to FPS in percentiles. We use the GeForce GTX 1070, the Radeon R9 Fury and the Radeon RX 480. We stated already that the RX 480 is pretty much flying; as you can see it is as fast as the Fury, which is a factory-tweaked ASUS card.

I often get asked why we do not include the faster Fury X here. Well, FCAT needs a DVI monitor output, and AMD is not implementing them any more on their reference products; only board partners release DVI-enabled products. Hence the R9 Fury we use is the STRIX from ASUS, as it has a proper DVI output connector. In the plot, the 50% mark can be considered the average frame-rate. The cards are nice and close to one another. The plot is based on the first 31 seconds measured in the benchmark.
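For readers who want to reproduce a percentile view from their own frame-time log, a minimal sketch follows. It sorts the frame times, picks the value at a given percentile with the nearest-rank method, and expresses it as FPS. The nearest-rank choice, the function name and the sample data are assumptions; the exact calculation behind the FCAT plot is not described here.

```python
import math


def fps_at_percentile(frametimes_ms, percentile):
    """FPS equivalent of the frame time at the given percentile (nearest-rank method)."""
    if not frametimes_ms:
        raise ValueError("need at least one frame time")
    if not 0 < percentile <= 100:
        raise ValueError("percentile must be in (0, 100]")
    ordered = sorted(frametimes_ms)  # fastest frames first, slowest last
    # Multiply before dividing so whole-number percentiles stay exact
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    return 1000.0 / ordered[rank - 1]


if __name__ == "__main__":
    # Made-up run: mostly 12.5 ms frames with two slow ones at the end
    sample = [10.0, 12.5, 12.5, 12.5, 12.5, 12.5, 12.5, 12.5, 20.0, 50.0]
    for p in (50, 90, 99):
        print(f"{p}% -> {fps_at_percentile(sample, p):.0f} FPS")
```

Strictly speaking the 50% value is the median rather than the arithmetic mean, but for a run without heavy stutter the two land close together. The high percentiles are where the slow frames show up as a drop-off.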

Frame Pacing / Frametime

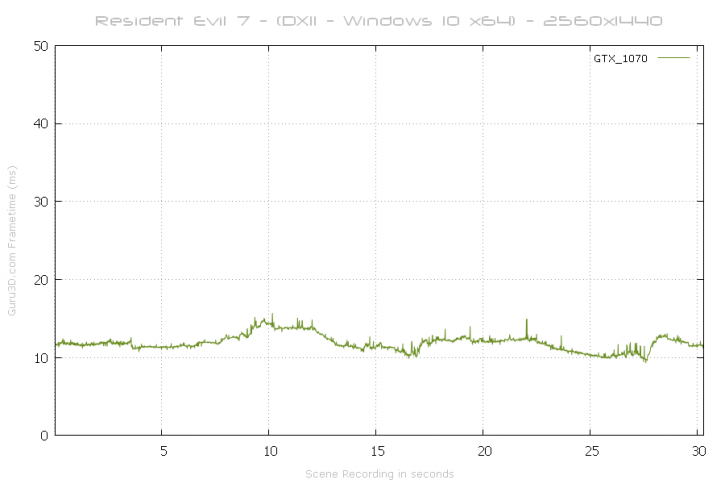

Above is the frame-time results plot of the test run at 2560x1440 (WQHD), performed with a 469 USD GeForce GTX 1070. That's very nice rendering really.

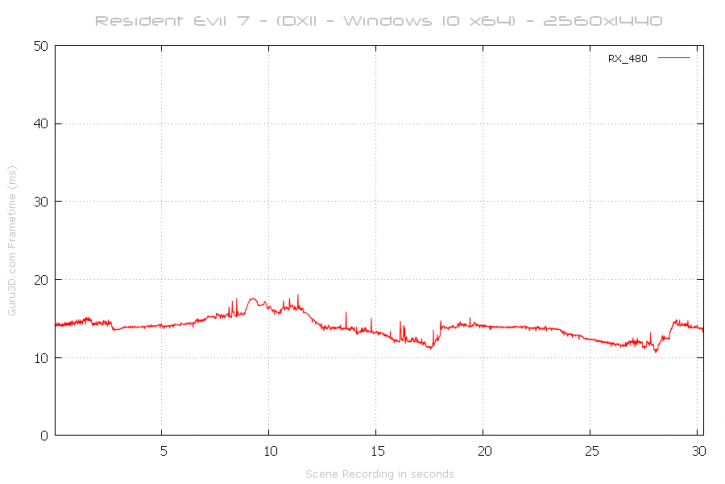

Now we look at the Radeon RX 480 8GB. As you can see, extremely smooth. That is just very impressive for a 239 USD card!

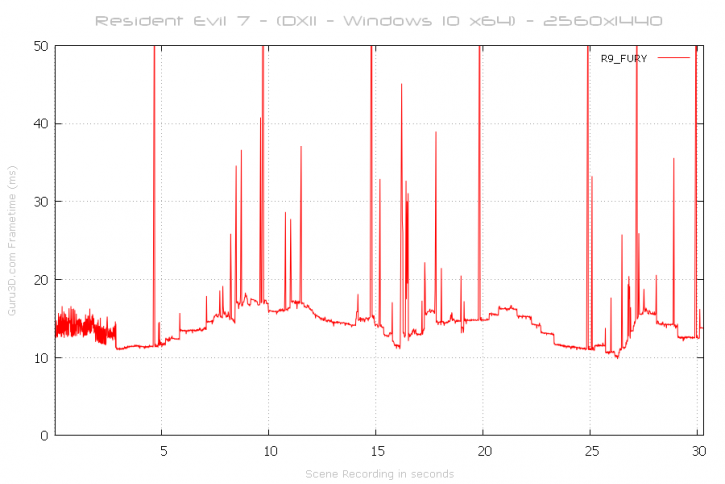

And then above, the R9 Fury 4GB: many visible spikes and stutters. This is what is causing the weird FPS offsets in the charts. At this point I feel it might be VRAM, i.e. 4GB on the Fury is not enough: the game loads some textures or caches and boom, a stutter. We think 6GB might be the sweet spot for the game.

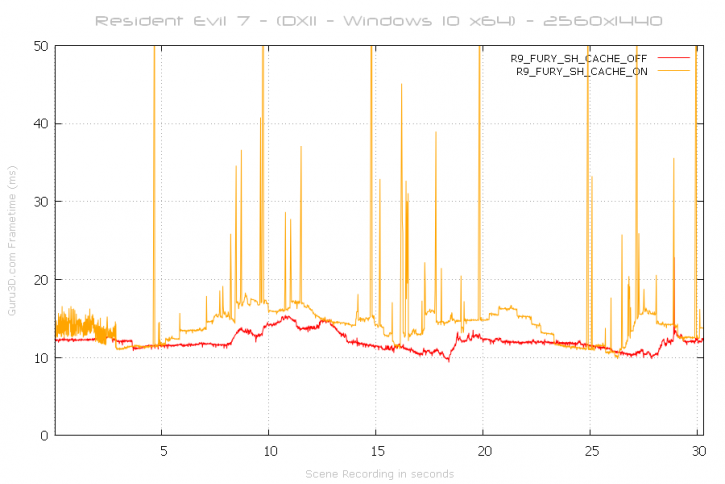

Update: Now let's try what we have been plastering throughout our article after numerous updates. We'll enable and disable the dreaded Shadow Cache on this 4GB card:

So above you can clearly see the culprit. The Shadow Cache preset is great for 6GB and 8GB cards, but not so much for 4GB or less. You'll definitely want to disable the cache options, as you'll go from stuttery to totally smooth.

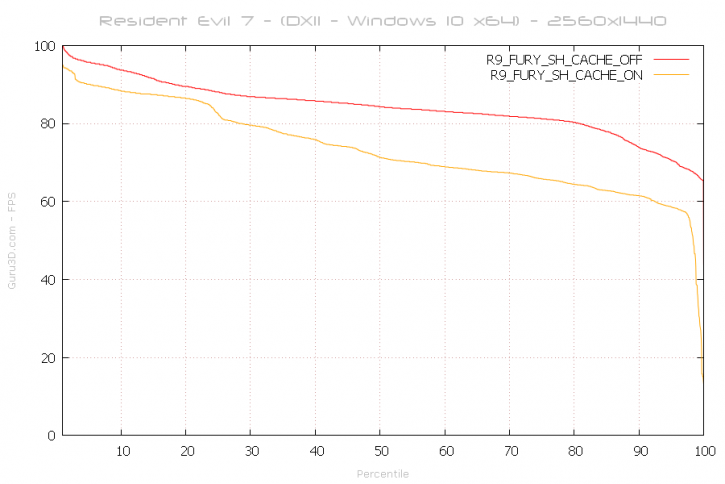

When we take the plot results for the two modes on the Fury and translate them to relative FPS, yes ... that was causing the massive drop-off in performance. As you can see, at 50% you'd have your average framerate. With Shadow Cache on that would be, say, 70 FPS. With Shadow Cache off we are passing 80 FPS. Also look at the end of that orange line: a huge drop-off, that's low framerates as a result of the stutters.

So in short, got 4GB or less? Definitely leave Shadow Cache off. Got 6GB or more? Leave it enabled, as there it'll give you a nice boost.