Power efficiency - Dyn Clock Adjustment

Power efficiency

Moving to a 28nm chip means you can do more with less as the transistors shrink; the GK104 GPU has roughly 3.54 billion transistors embedded in it. The TDP (maximum board power) sits at a very respectable 170~200 Watts. So on the one hand you have a graphics card that uses less power, and on the other it offers more performance. That's always a big win!

The GeForce GTX 680 Classified comes with two 8-pin power connectors to supply enough current, with some to spare for overclocking. We'll measure all of that later in the article, but first, the following chapter covers a directly related topic.
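For context, the PCI Express specifications cap how much power each source may deliver: 75 W from an x16 slot, 75 W per 6-pin and 150 W per 8-pin auxiliary connector. The sketch below computes the resulting in-spec budget, assuming those nominal limits (boards and power supplies can exceed them in practice when overclocking). The helper name is our own, and the two 6-pin layout is included only as a hypothetical comparison.

```python
# Nominal in-spec power limits in watts, per the PCI Express specifications.
SLOT_W = 75          # PCIe x16 graphics slot
SIX_PIN_W = 75       # each 6-pin auxiliary connector
EIGHT_PIN_W = 150    # each 8-pin auxiliary connector


def board_power_budget(six_pin: int = 0, eight_pin: int = 0) -> int:
    """Maximum in-spec board power for a connector layout (hypothetical helper)."""
    return SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


classified = board_power_budget(eight_pin=2)  # 75 + 2 x 150
two_six_pin = board_power_budget(six_pin=2)   # 75 + 2 x 75, for comparison only

print(f"2x 8-pin: {classified} W, 2x 6-pin: {two_six_pin} W")
print(f"Headroom over a 200 W TDP with 2x 8-pin: {classified - 200} W")
```

With two 8-pin connectors the card can draw up to 375 W within spec, leaving roughly 175 W above the upper end of the quoted TDP range.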

Dynamic Clock Adjustment technology

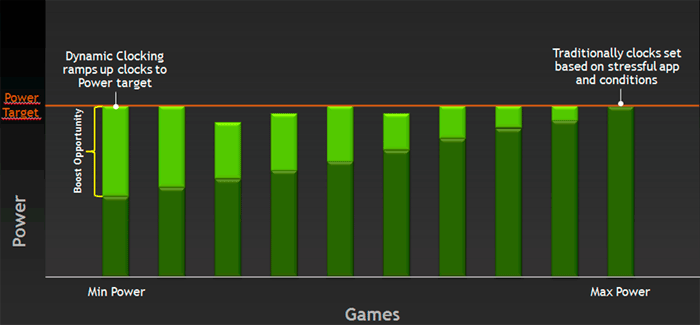

Kepler GPUs feature Dynamic Clock Adjustment technology, and it is easy to explain without getting complicated. Typically, when your graphics card idles, its clock frequency goes down... yes?

Well, Kepler architecture cards obviously do this as well, but now it also works the other way around. If the GPU has headroom left in a game, it increases its clock frequency a little to add some extra performance. You could say that the graphics card is maximizing its available power threshold.
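To illustrate the idea of clocking up until a power limit is reached, here is a toy governor loop. This is a minimal sketch under loudly stated assumptions, not NVIDIA's actual algorithm: the linear power model, the 13 MHz step, the 1110 MHz ceiling and the 195 W target are all hypothetical values chosen for illustration; only the 1006 MHz base clock comes from this article.

```python
# Toy model of a power-target boost governor. All constants except BASE_MHZ
# are hypothetical; this is NOT NVIDIA's real GPU Boost algorithm.
BASE_MHZ = 1006      # reference GTX 680 base clock
MAX_MHZ = 1110       # hypothetical boost ceiling
STEP_MHZ = 13        # hypothetical clock step per control tick
TARGET_W = 195.0     # hypothetical power target


def estimated_power(clock_mhz: int, load: float) -> float:
    """Hypothetical linear power model: heavier load means more watts per MHz."""
    return load * clock_mhz * 0.2


def next_clock(clock_mhz: int, power_w: float) -> int:
    """Step down when over the power target, otherwise step up toward the ceiling."""
    if power_w > TARGET_W:
        return max(BASE_MHZ, clock_mhz - STEP_MHZ)
    return min(MAX_MHZ, clock_mhz + STEP_MHZ)


def simulate(load: float, ticks: int = 20) -> list[int]:
    """Run the governor for a fixed workload and record the clock after each tick."""
    clock = BASE_MHZ
    history = []
    for _ in range(ticks):
        clock = next_clock(clock, estimated_power(clock, load))
        history.append(clock)
    return history


for load in (0.7, 0.9, 1.0):
    print(f"load {load}: final clock {simulate(load)[-1]} MHz")
```

A light load climbs all the way to the ceiling, a medium load settles into hovering between two steps around the power target, and a heavy load that already exceeds the target stays at the base clock.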

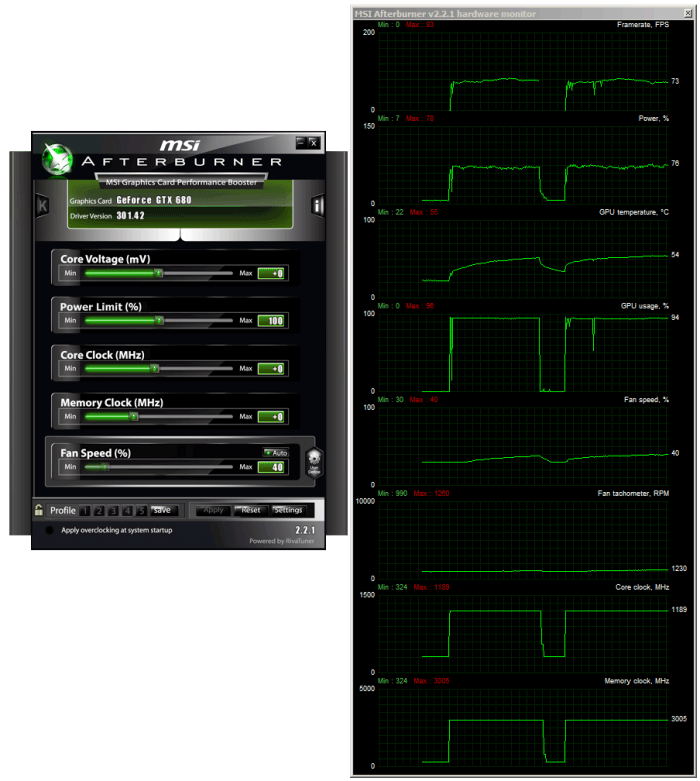

Example of a stress test applied to the GPU, where the dynamic boost clock climbs as high as 1190 MHz

Put even more simply, DCA somewhat resembles Intel's Turbo Boost technology: it automatically increases the graphics core frequency by 5 to 10 percent when the card runs below its rated TDP. The base clock of the GTX 680 in 3D mode is 1006 MHz (reference cards), and it can now boost to 1058 MHz when the power envelope allows it. The 'Boost Clock' is, however, directly tied to the maximum board power, so the GPU may clock even higher as long as it stays within its power target. We've seen the GPU run in excess of 1100 MHz for brief periods of time.
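Putting the reference numbers side by side shows where they fall in the stated 5 to 10 percent window. The short calculation below uses only the clocks quoted above; the helper name is our own.

```python
BASE_MHZ = 1006   # reference GTX 680 base clock (3D mode)
BOOST_MHZ = 1058  # reference rated boost clock


def uplift_pct(clock_mhz: float, base_mhz: float = BASE_MHZ) -> float:
    """Clock increase over the base clock, in percent."""
    return (clock_mhz / base_mhz - 1) * 100


low, high = BASE_MHZ * 1.05, BASE_MHZ * 1.10
print(f"5-10% over base: {low:.0f}-{high:.0f} MHz")
print(f"Rated boost clock: +{uplift_pct(BOOST_MHZ):.1f}%")
print(f"1100 MHz mark: +{uplift_pct(1100):.1f}%")
```

The rated 1058 MHz boost works out to roughly 5.2 percent above base, and the 1100 MHz mark to roughly 9.3 percent, so both sit inside the 1056 to 1107 MHz window.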

Overclocking works along the same lines: GPU Boost continues to operate while you overclock, and it remains restricted to the TDP bracket. We'll show you that in our overclocking chapter.

3D Vision Surround - 4 displays - Adaptive VSync

PCIe Gen 3

NVIDIA's 600 series cards are all PCI Express Gen 3 ready. Gen 3 doubles the effective transfer rate of the previous generation, which opens up capabilities for next-generation extreme gaming solutions. Compared to current Gen 2 PCI Express slots, PCI Express Gen 3 offers twice the available bandwidth (32GB/s bidirectional on an x16 slot) along with improved efficiency and compatibility, and as such it will offer better performance for current and next-gen PCI Express cards.

To make it even more understandable: going from PCIe Gen 2 to Gen 3 doubles the bandwidth available to installed add-on cards, from 500MB/s per lane to 1GB/s per lane. A Gen 3 PCI Express x16 slot can therefore offer 16GB/s (or 128Gbit/s) of bandwidth in each direction, which results in 32GB/s of bidirectional bandwidth.
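The per-lane figures follow from each generation's transfer rate and line encoding: Gen 2 runs at 5 GT/s with 8b/10b encoding, while Gen 3 runs at 8 GT/s with the more efficient 128b/130b encoding, which is why a smaller jump in raw signalling rate still roughly doubles usable bandwidth. The sketch below computes this, ignoring packet and protocol overhead; the "1GB/s" quoted above is the rounded Gen 3 value. Function names are our own.

```python
# Usable per-lane PCIe bandwidth = transfer rate x encoding efficiency / 8 bits per byte.
# Packet/protocol overhead is ignored in this simplified calculation.
GENERATIONS = {
    # name: (transfer rate in GT/s, payload bits, encoded bits)
    "Gen 2": (5.0, 8, 10),      # 8b/10b encoding
    "Gen 3": (8.0, 128, 130),   # 128b/130b encoding
}


def lane_mb_per_s(gen: str) -> float:
    """One-direction bandwidth of a single lane in MB/s (decimal units)."""
    rate_gt, payload, encoded = GENERATIONS[gen]
    gbit_per_s = rate_gt * payload / encoded
    return gbit_per_s * 1000 / 8


def slot_gb_per_s(gen: str, lanes: int = 16, bidirectional: bool = False) -> float:
    """Slot bandwidth in GB/s, per direction or summed over both directions."""
    per_direction = lane_mb_per_s(gen) * lanes / 1000
    return per_direction * 2 if bidirectional else per_direction


for gen in GENERATIONS:
    print(f"{gen}: {lane_mb_per_s(gen):.0f} MB/s per lane, "
          f"x16 = {slot_gb_per_s(gen):.2f} GB/s each way, "
          f"{slot_gb_per_s(gen, bidirectional=True):.2f} GB/s total")
```

Gen 3 comes out at about 985 MB/s per lane and about 31.5 GB/s bidirectional for x16, which rounds to the 1GB/s and 32GB/s figures above.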

Adaptive VSync

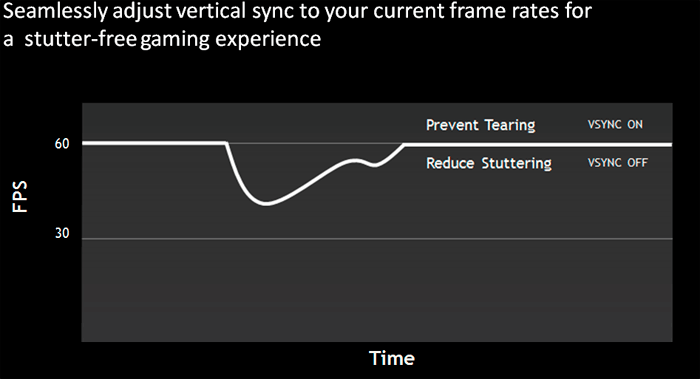

In its effort to eliminate screen tearing, NVIDIA will now implement Adaptive VSync in its drivers.

VSync synchronizes the rate at which your graphics card outputs frames with the rate at which your monitor redraws the screen each second (measured in FPS and Hz respectively). Unfortunately, if you disable VSync, your graphics card and monitor will inevitably go out of sync whenever your FPS exceeds the refresh rate (e.g. 120 FPS on a 60Hz screen), and that causes screen tearing. The precise visual impact of tearing depends on just how far your graphics card and monitor go out of sync, but usually the higher your FPS and/or the faster your movements in a game (such as rapidly turning around), the more noticeable it becomes.

Adaptive VSync is designed to help here. While normal VSync addresses tearing when frame rates are high, it introduces another problem: stuttering. Stuttering occurs when frame rates drop below 60 frames per second, causing VSync to fall back to 30Hz and other integer fractions of 60, such as 20 or even 15 Hz.
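Why 30, 20 and 15? With classic double-buffered VSync, a finished frame waits for the next refresh, so a frame that misses one 60Hz interval occupies two, three or more intervals. The sketch below models that, assuming double buffering and a constant render rate (triple buffering behaves differently); the function name is our own.

```python
import math

REFRESH_HZ = 60


def vsync_fps(render_fps: float, refresh_hz: int = REFRESH_HZ) -> float:
    """Displayed frame rate with double-buffered VSync (simplified model).

    Each frame is held until the next vertical refresh, so it occupies a
    whole number of refresh intervals.
    """
    intervals_per_frame = math.ceil(refresh_hz / render_fps)
    return refresh_hz / intervals_per_frame


for fps in (75, 59, 40, 25, 16):
    print(f"GPU renders {fps} FPS -> display shows {vsync_fps(fps):.0f} FPS")
```

Note how dropping from 60 to just 59 FPS already halves the displayed rate to 30 FPS in this model, which is the stutter described above.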

To deal with this issue, NVIDIA has come up with Adaptive VSync, which will be integrated into the series 300 drivers to minimize that stuttering effect. When the frame rate drops below 60 FPS, Adaptive VSync automatically disables VSync, allowing frames to be delivered at their natural rate and effectively reducing stutter. Once frame rates return to 60 FPS, Adaptive VSync turns VSync back on to reduce tearing.
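The switching rule described above can be sketched in a few lines. This is a simplified model that assumes a fixed 60Hz refresh and a steady render rate; the function name is our own, and the real driver logic is not public in this form.

```python
REFRESH_HZ = 60


def adaptive_vsync(render_fps: float, refresh_hz: int = REFRESH_HZ) -> tuple[bool, float]:
    """Return (vsync_on, displayed_fps) under a simplified Adaptive VSync model.

    At or above the refresh rate, VSync stays on and caps output at the
    refresh rate (no tearing). Below it, VSync is switched off so frames are
    shown at the GPU's natural rate instead of dropping to 30, 20 or 15 FPS.
    """
    if render_fps >= refresh_hz:
        return True, float(refresh_hz)
    return False, float(render_fps)


for fps in (90, 60, 55, 40):
    on, shown = adaptive_vsync(fps)
    print(f"{fps} FPS -> VSync {'on' if on else 'off'}, displayed {shown:.0f} FPS")
```

The trade-off is explicit here: below 60 FPS you accept possible tearing in exchange for avoiding the jump down to 30 FPS.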

Adaptive VSync will be an optional setting in the upcoming GeForce series 300 drivers, not the default.

NVENC

NVENC is a dedicated encoding engine: a new hardware-based H.264 video encoder inside the GPU. In the past this work was handled by the shader processor cores, but with the introduction of Kepler, extra core logic dedicated to this function has been added.

Nothing that new, you might think, but hardware 1080p encoding now runs at 4 to 8 times real-time speed. The supported format follows the traditional Blu-ray standard, H.264 High Profile Level 4.1, and Multiview Video Coding is supported for stereoscopic content. Encoding at resolutions of up to 4096x4096 is supported as well.
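To put "4 to 8 times real-time" into numbers: encode time is the clip length divided by the speed factor, and the encoder has to sustain the source frame rate multiplied by that factor. The 10-minute clip and 30 FPS source below are hypothetical examples, not measurements.

```python
def encode_seconds(clip_seconds: float, speed_x: float) -> float:
    """Wall-clock encode time when encoding runs at speed_x times real time."""
    return clip_seconds / speed_x


def encode_fps(source_fps: float, speed_x: float) -> float:
    """Frames per second the encoder must sustain to reach speed_x."""
    return source_fps * speed_x


CLIP_S = 10 * 60   # hypothetical 10-minute clip
SOURCE_FPS = 30    # hypothetical 30 FPS source

for speed in (4, 8):
    print(f"{speed}x: {encode_seconds(CLIP_S, speed):.0f} s, "
          f"{encode_fps(SOURCE_FPS, speed):.0f} FPS")
```

So a 10-minute 1080p clip would take roughly 75 to 150 seconds, with the encoder processing 120 to 240 frames per second.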

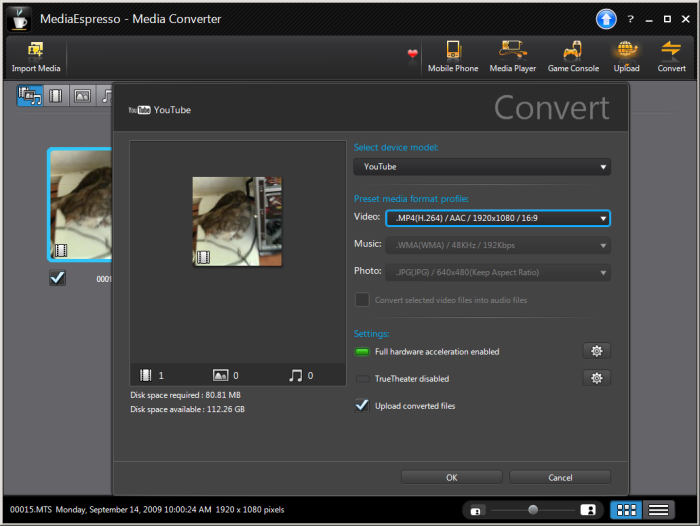

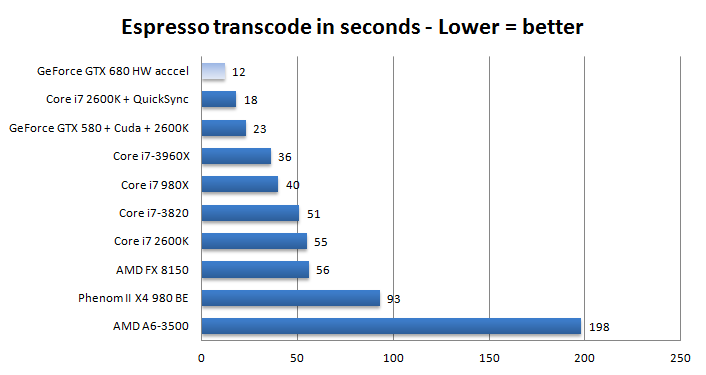

Above you can see CyberLink Espresso with NVENC enabled, crunching through our standard test. We ran the test and charted the results.

It's not that your processor can't handle this, but the big benefit of encoding on the GPU is saving watts, and thus power consumption, big-time. Imagine the possibilities for transcoding and video editing, but also think in terms of videoconferencing and, heck, why not, wireless display technology.

The results of this test are shown above. We transcode a 200 MB AVCHD 1920x1080i media file to an MP4 file (YouTube format). The chart shows the number of seconds needed to complete the job, so lower is better.

This quick chart is only an indication, as the results were gathered with different revisions of the Espresso software and could differ a tiny bit. The same testing methodology and video files were used, of course. So where a Core i7 3960X takes 36 seconds to transcode the media file (purely on the processor), NVENC gets the same workload done in 12 seconds. Very impressive: that's roughly twice as fast as a GTX 580 with CUDA transcoding and roughly a third faster than a Core i7 2600K with QuickSync enabled as the hardware accelerator. The one thing we could not assess, however... was image quality. Further testing will need to show how NVENC fares quality-wise.
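The headline comparison is easy to quantify from the two times quoted above; the variable names are our own.

```python
CPU_S = 36    # Core i7 3960X, transcoding on the processor (seconds)
NVENC_S = 12  # GeForce GTX 680 with NVENC (seconds)

speedup = CPU_S / NVENC_S
time_saved_pct = (1 - NVENC_S / CPU_S) * 100
print(f"NVENC is {speedup:.1f}x faster, saving {time_saved_pct:.0f}% of the time")
```

In other words, NVENC finishes this job three times faster than the six-core CPU, cutting about two thirds off the wait.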

And yes, I kept an eye on it: transcoding on the CPU resulted in a power draw of roughly 300 Watts for the entire PC, versus just 190 Watts when run on the GeForce GTX 680 (entire PC measured). So NVENC not only saves heaps of time, it also saves a lot of power.
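Since the GPU both draws less and finishes sooner, the savings compound when measured as energy per job (power multiplied by time). The sketch below assumes the 300 W reading belongs to the 36-second Core i7 3960X run and the 190 W reading to the 12-second NVENC run, and that draw stays roughly constant during each job; both are whole-system figures, so idle platform power is included.

```python
# Whole-system readings from this article. Assumption: 300 W applies to the
# 36 s Core i7 3960X run and 190 W to the 12 s NVENC run, with constant draw.
RUNS = {
    "CPU (Core i7 3960X)": {"watts": 300, "seconds": 36},
    "NVENC (GTX 680)": {"watts": 190, "seconds": 12},
}


def energy_wh(watts: float, seconds: float) -> float:
    """Energy used by one job in watt-hours."""
    return watts * seconds / 3600


for name, run in RUNS.items():
    print(f"{name}: {energy_wh(**run):.2f} Wh")

cpu = energy_wh(**RUNS["CPU (Core i7 3960X)"])
gpu = energy_wh(**RUNS["NVENC (GTX 680)"])
print(f"Energy ratio: {cpu / gpu:.1f}x")
```

Under these assumptions the CPU run uses about 3.0 Wh and the NVENC run about 0.63 Wh, roughly 4.7 times less energy for the same transcode.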