I'm not sure why people feel the need to explain that it's "no longer possible" for consoles to have better graphics than a PC. Have a peek at part of the interview, after the break.

PCPP: In the past, when a new console launched, the graphics were on par, if not even better, than a reasonably well-specced PC of the time. Yet at this year's E3, we noticed that the Xbox One and PS4 demos didn't look as good as the earlier PC demos of the same games. Do you think the lead that consoles had in the past at launch day is over?

Tamasi: It’s no longer possible for a console to be a better or more capable graphics platform than the PC. I’ll tell you why. In the past, certainly with the first PlayStation and PS2, in that era there weren’t really good graphics on the PC. Around the time of the PS2 is when 3D really started coming to the PC, but before that time 3D was the domain of Silicon Graphics and other 3D workstations. Sony, Sega or Nintendo could invest in bringing 3D graphics to a consumer platform. In fact, the PS2 was faster than a PC.

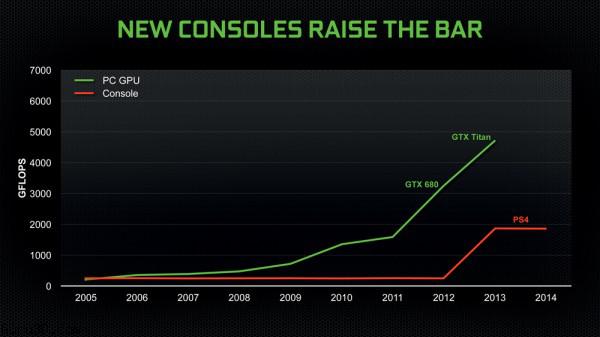

By the time of the Xbox 360 and PS3, the consoles were on par with the PC. If you look inside those boxes, they’re both powered by graphics technology from AMD or NVIDIA, because by that time all the graphics innovation was being done by PC graphics companies. NVIDIA spends 1.5 billion US dollars per year on research and development in graphics, every year, and in the course of a console’s lifecycle we’ll put over 10 billion dollars into graphics research. Sony and Microsoft simply can’t afford to spend that kind of money. They just don’t have the investment capacity to match the PC guys; we can do it thanks to economies of scale, as we sell hundreds of millions of chips, year after year.


The second factor is that everything is limited by power these days. If you want to go faster, you need a more efficient design or a bigger power supply. The laws of physics dictate that the amount of performance you’re going to get from graphics is a function of the efficiency of the architecture, and how much power budget you’re willing to give it. The most efficient architectures are from NVIDIA and AMD, and you’re not going to get anything that is significantly more power efficient in a console, as it’s using the same core technology. Yet the consoles have power budgets of only 200 or 300 Watts, so that they can sit in the living room, cooled by small fans, and still run quietly and cool. And that’s always going to be less capable than a PC, where we spend 250W just on the GPU. There’s no way a 200W Xbox is going to beat a 1000W PC.

PCPP: But how is this different from the launch of the PlayStation 3 and Xbox 360, where they still had these power limitations but were on par with the PC?

Tamasi: Because at that time, the PC graphics industry wasn’t operating at the limits of device physics and power. If you wind back the clock, a high-end graphics card at that time was maybe 75W or 100W max. We weren’t building chips that were on the most advanced semiconductor process and had billions of transistors. Now we’re building GPUs at the limits of what’s possible with fabrication techniques. Nobody can build anything bigger or more powerful than what is in the PC at the moment. It just is not possible, but that wasn’t the case in the last generation of consoles. Taken to the theoretical limits, the best any console could ever do would be to ship a console that is equal to the best PC at that time. But then a year later it’s going to be slower, and it still wouldn’t be possible due to the power limits.

There’s been a shift here: the R&D budgets required to build the PC’s level of graphics are enormous, and there are only a few companies that can do it. The technology that we’re applying to PC graphics is literally state of the art, at the limits of semiconductor technology. That’s why I don’t think it’s possible any more to have a console that can outperform the PC.