Block Diagrams, Specs and New Features

The Turing Block Diagrams

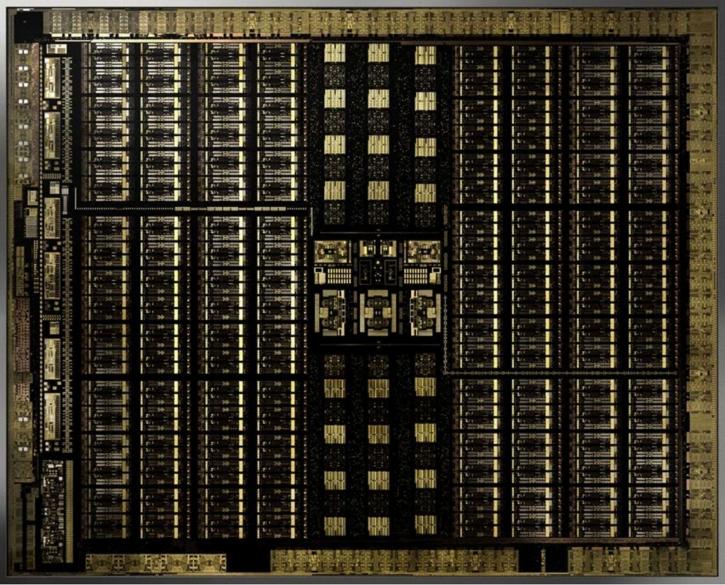

In this chapter of the architecture review, I'd like to chart up and present the separate GPU block diagrams. Keep in mind that the specifications of the 2080 and 2080 Ti differ slightly from those of the fully enabled GPUs, as these cards have some shader / RT / Tensor partitions disabled. The block diagrams you see here are based on the fully enabled GPUs. Only the GeForce RTX 2070 uses a fully enabled chip, the TU106.

RT cores and hardware-assisted raytracing

NVIDIA is adding 72 RT cores to its full Turing GPU. By utilizing these, developers can apply what I refer to as hybrid raytracing. You are still performing your shaded (rasterization) rendering; however, developers can add real-time raytraced environmental effects like reflections and refractions of light onto objects. Think of sea, waves, and water showing precise and accurate reflections of the world and its lights. You can also think of a fire or explosion, bouncing light off walls and reflecting in the water. It's not just about reflections and light rays bouncing the right way, but also about the flip side: shadows. Where there's light there should be shadow, and 100% accurate soft shadows can now be computed. It has always been really hard to create accurate and proper shadows in a traditional shading engine; raytracing now makes this possible. Raytraced ambient occlusion and global illumination are also going to be a big thing. None of this has been practical in real time before, and raytracing is all about achieving realism in your game.
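
To make the shadow part a bit more concrete, here's a minimal CPU-side sketch in Python of what a raytraced shadow test boils down to: from a surface point (which the rasterizer would normally hand you), shoot a ray towards the light and check whether anything blocks it. This is purely an illustration and not how the RT cores or any NVIDIA API work internally; the sphere occluders, the sampled area light and all function names are my own assumptions.

```python
import math

def ray_hits_sphere(origin, direction, center, radius, max_t):
    """True if origin + t*direction hits the sphere for some 0 < t < max_t.

    `direction` is assumed to be normalized.
    """
    oc = [o - c for o, c in zip(origin, center)]
    b = sum(d * x for d, x in zip(direction, oc))
    c = sum(x * x for x in oc) - radius * radius
    disc = b * b - c              # discriminant of t^2 + 2bt + c = 0
    if disc < 0:
        return False              # the ray misses the sphere entirely
    root = math.sqrt(disc)
    eps = 1e-4                    # ignore hits right at the starting point
    return eps < -b - root < max_t or eps < -b + root < max_t

def in_shadow(point, light_pos, occluders):
    """Hard shadow: is the straight path from point to light blocked?"""
    to_light = [l - p for l, p in zip(light_pos, point)]
    dist = math.sqrt(sum(x * x for x in to_light))
    direction = [x / dist for x in to_light]
    return any(ray_hits_sphere(point, direction, center, radius, dist)
               for center, radius in occluders)

def light_visibility(point, light_samples, occluders):
    """Soft shadow: fraction of sample points on an area light that are visible."""
    visible = sum(1 for s in light_samples if not in_shadow(point, s, occluders))
    return visible / len(light_samples)
```

A value of 1.0 from `light_visibility` means fully lit, 0.0 means fully in shadow, and anything in between is the soft penumbra that is so hard to fake convincingly with rasterization alone.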

Please watch the above video

Now, I could write up three thousand words and you'd still be confused as to what you can achieve with raytracing in a game scene. Ergo, I'd like to invite you to look at the video above, which I recorded at a recent NVIDIA event. It's recorded by hand with a smartphone, but even at this quality you can easily see how impressive the technology is by looking at several use-case examples that can be applied to games. Put differently: try to pick out the different RT technologies used and imagine them in a game, as that is the ultimate goal we're trying to achieve here.

DLSS High-Quality Motion Image Generation

Deep Learning Super Sampling (DLSS) is a supersampling AA algorithm that uses Tensor core-accelerated neural network inferencing to create what NVIDIA refers to as high-quality, supersampling-like anti-aliasing. By itself, a GeForce RTX graphics card will offer a good performance increase compared to its last-gen counterpart; NVIDIA mentions roughly 50% higher performance under 4K HDR 60 Hz conditions for the faster graphics cards. That figure excludes real-time raytracing and DLSS; it is your pure shading performance.

Deep learning can now be applied in games as an alternative AA (anti-aliasing) solution. Basically, the shading engine renders your frame and passes it on to the Tensor engine, which super samples and analyzes it based on an algorithm (not a filter), applies its AA and passes it back. The new DLSS 2X function will offer TAA quality anti-aliasing at little to no cost: you render your games 'without AA' on the shader engine, and the frames are then passed to the Tensor cores, which apply supersampling and perform anti-aliasing at a quality level comparable to TAA. That means super-sampled AA at very little cost. At launch, a dozen or so games will support Tensor core optimized DLSS, and more titles will follow. Contrary to what many believe, deep learning AA is not a simple filter; it is an adaptive algorithm. The setting, in the end, will be available in the NV driver properties with a slider.

Here's NVIDIA's explanation: to train the network, they collect thousands of “ground truth” reference images rendered with the gold standard method for perfect image quality, 64x supersampling (64xSS). 64x supersampling means that instead of shading each pixel once, we shade at 64 different offsets within the pixel, and then combine the outputs, producing a resulting image with ideal detail and anti-aliasing quality. We also capture matching raw input images rendered normally.
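
As a quick aside, the "shade at 64 different offsets and combine" idea can be sketched in a few lines of Python. Note the assumptions: NVIDIA does not say which 64 offsets are used or how the outputs are combined, so the regular 8x8 grid and the plain average here are my own choices, and `shade` is a made-up scene with a single diagonal edge.

```python
def shade(x, y):
    """Hypothetical scene: white (1.0) below the edge y = 0.5 * x, black above."""
    return 1.0 if y < 0.5 * x else 0.0

def render_pixel(px, py, grid=1):
    """Shade pixel (px, py) at grid*grid regularly spaced offsets and average.

    grid=1 is the normal case (one sample at the pixel centre);
    grid=8 gives 64 samples per pixel, i.e. 64x supersampling.
    """
    total = 0.0
    for j in range(grid):
        for i in range(grid):
            sample_x = px + (i + 0.5) / grid
            sample_y = py + (j + 0.5) / grid
            total += shade(sample_x, sample_y)
    return total / (grid * grid)

# The same pixel, rendered normally and with 64 samples.
raw_value = render_pixel(0, 0, grid=1)
reference_value = render_pixel(0, 0, grid=8)
```

For the pixel at (0, 0) the single centre sample misses the edge completely and returns 0.0, whereas the 64-sample version returns 0.25, which is the fraction of the pixel the white area actually covers. That difference between the raw and the reference value is what the network is trained to bridge.
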
Next, we start training the DLSS network to match the 64xSS output frames, by going through each input, asking DLSS to produce an output, measuring the difference between its output and the 64xSS target, and adjusting the weights in the network based on the differences, through a process called backpropagation. After many iterations, DLSS learns on its own to produce results that closely approximate the quality of 64xSS, while also learning to avoid the problems with blurring, disocclusion, and transparency that affect classical approaches like TAA. In addition to the DLSS capability described above, which is the standard DLSS mode, we provide a second mode, called DLSS 2X. In this case, DLSS input is rendered at the final target resolution and then combined by a larger DLSS network to produce an output image that approaches the level of the 64x super sample rendering - a result that would be impossible to achieve in real time by any traditional means.
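
The training loop described above (produce an output, measure the difference to the target, adjust the weights) can be shown with a toy example. To be clear, this is a sketch under heavy assumptions and not NVIDIA's network: the "network" is a single 3-tap filter over a 1D row of pixels, the target is exact pixel coverage standing in for a 64xSS frame, and with only one linear layer backpropagation reduces to a plain gradient step. All names are hypothetical.

```python
def make_pair(edge, width=8):
    """One training pair for a 1D row with a bright-to-dark edge at `edge`."""
    # Raw input: one sample at each pixel centre, so only 0.0 or 1.0 (aliased).
    raw = [1.0 if i + 0.5 < edge else 0.0 for i in range(width)]
    # Target: exact coverage per pixel, standing in for the 64xSS reference.
    target = [min(1.0, max(0.0, edge - i)) for i in range(width)]
    return raw, target

def taps_at(raw, i):
    """Left, centre and right neighbour of pixel i, clamped at the borders."""
    return raw[max(i - 1, 0)], raw[i], raw[min(i + 1, len(raw) - 1)]

def predict(weights, raw):
    """Apply the 3-tap filter to every pixel of the row."""
    return [sum(w * t for w, t in zip(weights, taps_at(raw, i)))
            for i in range(len(raw))]

def loss_and_grad(weights, pairs):
    """Mean squared error against the targets, plus its gradient per weight."""
    loss, grad, count = 0.0, [0.0, 0.0, 0.0], 0
    for raw, target in pairs:
        for i, (out, want) in enumerate(zip(predict(weights, raw), target)):
            err = out - want
            loss += err * err
            for k, tap in enumerate(taps_at(raw, i)):
                grad[k] += 2.0 * err * tap
            count += 1
    return loss / count, [g / count for g in grad]

def train(pairs, steps=200, lr=0.1):
    """Gradient descent: measure the error, then nudge the weights against it."""
    weights = [0.0, 1.0, 0.0]          # start as a pass-through filter
    history = []
    for _ in range(steps):
        loss, grad = loss_and_grad(weights, pairs)
        history.append(loss)
        weights = [w - lr * g for w, g in zip(weights, grad)]
    return weights, history

# Edges at 2.0, 2.1, ... 5.9 so the filter sees many sub-pixel positions.
pairs = [make_pair(2.0 + k / 10.0) for k in range(40)]
weights, history = train(pairs)
```

Comparing `history[0]` with `history[-1]` shows the error against the reference shrinking as the weights are adjusted. A three-weight filter can only get so close, of course, which is exactly why the real thing uses a far larger network.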

Let me also clearly state that it's not a 100% perfect super sampling AA technology, but it's pretty good from what we have seen so far. As DLSS runs on the Tensor cores, your shader engine is offloaded, so you're rendering a game at the performance of no AA. In short, a normal resolution frame gets output with super high-quality AA, and DLSS 2X is comparable to 64x super-sampled AA. It is all done with deep learning, trained on supersampled images, and run through the Tensor cores.

Some high-res comparison shots can be seen here, here and here. Warning: file sizes are 1 to 2 MB each.