Power Consumption


Right, we installed the cards, so let's have a look at how much power draw we measure with these graphics cards active and running.

The methodology: we have a device constantly monitoring the power draw of the PC. We stress only the GPU, not the processor. The difference between the idle and load wattage tells us roughly how much power a graphics card consumes under load.

Note: there has been much discussion about using FurMark as a stress test to measure power load. FurMark is so hard on the GPU that it does not represent an objective power draw compared to really hefty gaming. If we take a very-harsh-on-the-GPU gaming title, measure power consumption, and then compare that with FurMark, the FurMark figure can be 50 to 100W higher on a high-end graphics card, solely because of FurMark.

We decided to move away from FurMark in early 2011 and now use a game-like application that stresses the GPU 100% yet is far more representative of the power consumption and heat levels coming from the GPU. We are, however, not disclosing which application that is, as we do not want AMD/ATI/NVIDIA to 'optimize & monitor' our stress test in any way, for reasons of objectivity of course.

Our test system is based on a power-hungry Core i7 965 / X58 system, overclocked to 3.75 GHz. In addition, energy-saving functions are disabled for this motherboard and processor (to ensure consistent benchmark results). On average, this system draws roughly 50 to 100 Watts more than a standard PC due to the higher CPU clock settings, water-cooling, additional cold cathode lights, etc.

We'll be calculating the GPU power consumption here, not the total PC power consumption.

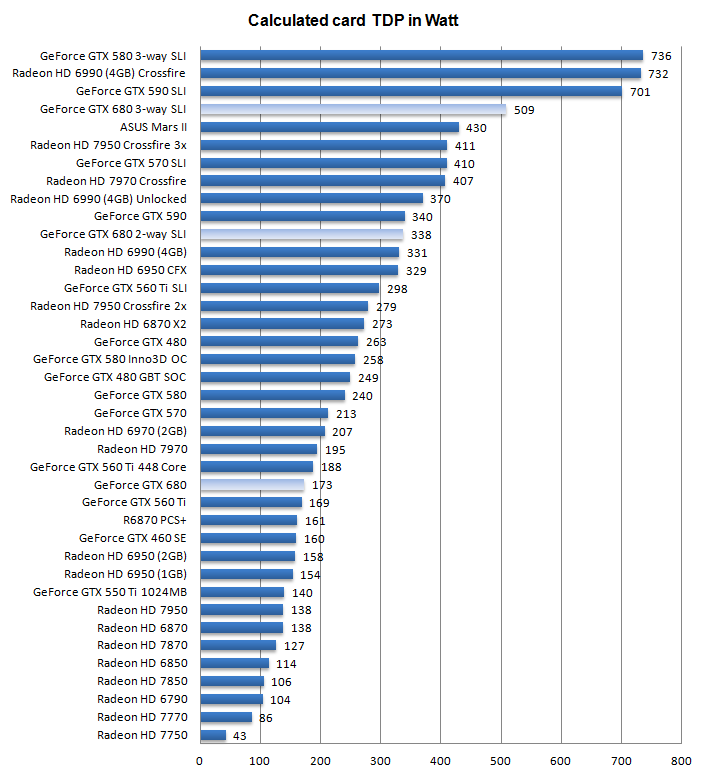

Measured power consumption, one card

- System in IDLE = 144W

- System Wattage with GPU in FULL Stress = 307W

- Difference (GPU load) = 163W

- Add average IDLE wattage ~10W

- Subjectively obtained GPU power consumption = ~173 Watts

Measured power consumption, two cards in 2-way SLI

- System in IDLE = 155W

- System Wattage with GPUs in FULL Stress = 473W

- Difference (GPU load) = 318W

- Add average IDLE wattage ~20W

- Subjectively obtained GPU power consumption = ~338 Watts

Measured power consumption, three cards in 3-way SLI

- System in IDLE = 170W

- System Wattage with GPUs in FULL Stress = 649W

- Difference (GPU load) = 479W

- Add average IDLE wattage ~30W

- Subjectively obtained GPU power consumption = ~509 Watts
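The arithmetic behind the three lists above is the same each time: full-load system draw minus idle system draw, plus an allowance for what the cards themselves draw at idle. A minimal sketch of that calculation follows; the ~10 W per card allowance is our reading of the ~10 W / ~20 W / ~30 W figures above, and the names `Measurement` and `gpu_power_estimate` are hypothetical, used only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    label: str
    cards: int
    idle_w: float  # whole-system draw at the wall, GPUs idle
    load_w: float  # whole-system draw at the wall, GPUs fully stressed


# Assumed idle draw per card that gets added back (~10 W each, per the lists above).
IDLE_PER_CARD_W = 10.0


def gpu_power_estimate(m: Measurement, idle_per_card_w: float = IDLE_PER_CARD_W) -> float:
    """Load minus idle gives the extra draw under stress; adding the cards'
    own idle draw back gives the estimated total GPU consumption."""
    delta = m.load_w - m.idle_w
    return delta + idle_per_card_w * m.cards


MEASUREMENTS = [
    Measurement("1x GTX 680", 1, 144, 307),
    Measurement("2x GTX 680 SLI", 2, 155, 473),
    Measurement("3x GTX 680 SLI", 3, 170, 649),
]

if __name__ == "__main__":
    for m in MEASUREMENTS:
        delta = m.load_w - m.idle_w
        print(f"{m.label}: difference {delta:.0f} W, GPU ~{gpu_power_estimate(m):.0f} W")
```

Running it reproduces the 163/173 W, 318/338 W and 479/509 W figures listed above.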

Mind you, the system wattage is measured at the wall socket, and there are other variables, like PSU power efficiency. So this is a calculated value, albeit a very good one.
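To give a feel for how much PSU efficiency can matter, here is a rough sketch that scales the wall-side GPU estimates by an efficiency factor. The 85% figure is purely hypothetical (the PSU and its efficiency curve are not specified here), and treating efficiency as constant across the load range is a simplification; real PSUs vary with load.

```python
# Hypothetical PSU efficiency; not a measured value for the test system.
ASSUMED_EFFICIENCY = 0.85


def dc_side_estimate(wall_w: float, efficiency: float = ASSUMED_EFFICIENCY) -> float:
    """Approximate power delivered on the DC side, given wall-side draw.
    Assumes a single constant efficiency, which real PSUs do not have."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return wall_w * efficiency


for label, wall in [("1 card", 173), ("2 cards", 338), ("3 cards", 509)]:
    print(f"{label}: wall-side ~{wall} W, DC-side ~{dc_side_estimate(wall):.0f} W "
          f"at {ASSUMED_EFFICIENCY:.0%} efficiency")
```

Under that assumption the DC-side figures come out roughly 15% lower than the wall-side ones, which is why the measured values above should be read as close estimates rather than exact card consumption.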

Above, a chart of relative power consumption. Again, the wattage shown is for the card(s) with the GPU(s) stressed 100%, showing only the peak GPU power draw, not the power consumption of the entire PC and not the average gaming power consumption.

Here is Guru3D's power supply recommendation:

- GeForce GTX 680 - On your average system the card requires you to have a 550 Watt power supply unit.

- GeForce GTX 680 2x SLI - On your average system the cards require you to have a 750 Watt power supply unit at minimum.

- GeForce GTX 680 3x SLI - On your average system the cards require you to have a 900 Watt power supply unit at minimum.
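As a quick sanity check, the measured full-load system draw can be compared against each recommended PSU rating. This is only an illustration, not how the recommendations were derived: the wall-side figures include PSU losses (so they overstate the DC load), and our test rig already draws more than an average PC. The helper `load_fraction` is a hypothetical name.

```python
# (recommended PSU rating, measured full-load system draw at the wall), in watts
CONFIGS = {
    "GeForce GTX 680": (550, 307),
    "GeForce GTX 680 2x SLI": (750, 473),
    "GeForce GTX 680 3x SLI": (900, 649),
}


def load_fraction(system_wall_w: float, psu_rating_w: float) -> float:
    """Fraction of the PSU rating taken up by the measured wall-side draw.
    Wall-side draw includes PSU losses, so this overstates the DC load."""
    if psu_rating_w <= 0:
        raise ValueError("PSU rating must be positive")
    return system_wall_w / psu_rating_w


for name, (rating, measured) in CONFIGS.items():
    frac = load_fraction(measured, rating)
    print(f"{name}: {measured} W of {rating} W -> {frac:.0%} of rating, "
          f"{rating - measured} W margin")
```

On these numbers the configurations sit at roughly 56%, 63% and 72% of the recommended rating, leaving some room for the overclocking headroom discussed next.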

Remember, if you are going to overclock the GPUs or processor, then we do recommend you purchase something with some more stamina. The minute you touch voltages on the CPU or GPUs, the power draw can rise quickly and substantially.

There are many good PSUs out there; please do have a look at our many PSU reviews, as we have loads of recommended PSUs for you to check out in there. Let's move to the next page, where we'll look into the heat and noise levels coming from this graphics card.